Your feed


How do you guys rate the Google Cybersecurity course and certificate out of 10?

I'm a neophyte in cybersecurity, in my second year of BTech (fully messed up), so I want to get Net+ and Sec+ and pursue the CCNA ASAP. Should I go for Google's cybersecurity course for the fundamentals and internship opportunities?

submitted by /u/SouMod


Cybersecurity Startup Advice

I’m currently a cybersecurity student and have been thinking a lot about how fast AI and cloud security are evolving. It feels like there are still huge gaps in cloud and AI security, and I'm wondering how I could turn those gaps into a startup.

Most MSSPs still seem heavily focused on traditional SOC and compliance work, which made me start thinking about whether there’s a big opportunity for more modern AI and cloud-focused security services.

I also keep wondering whether it makes more sense to start as a specialized MSSP first to understand real customer pain points and later turn repeated workflows into a SaaS platform, or if it’s better to immediately focus on building a SaaS security product even though that could take years before getting traction.

I enjoy building things and researching security problems, and it genuinely feels like this space is still very early with a lot of unsolved problems. Curious what others think the biggest opportunities are right now in AI/cloud security startups and I would appreciate any advice!

submitted by /u/Impressive-Blood-580


How worried should we be about AI powered cyberattacks?

With everything getting smarter and AI being everywhere now, I've been wondering how big of a threat AI powered cyberattacks really are. Is it just media hype or are these attacks actually happening in the wild? Also, how the hell do you even defend against something like that? Feels like AI would be way faster at finding weaknesses than a human could keep up with. If anyone works in cybersecurity, I'd love to hear what you’re seeing.

submitted by /u/IndyDayz


Temu is advertising filet mignon on X

Article URL: https://twitter.com/shoptemu/status/2053092200632685016

Comments URL: https://news.ycombinator.com/item?id=48117190

Points: 32

Comments: 4

Starship V3

Article URL: https://www.spacex.com/updates#starship-v3

Comments URL: https://news.ycombinator.com/item?id=48116781

Points: 118

Comments: 45

My graduation cap runs Rust

Article URL: https://ericswpark.com/blog/2026/2026-05-12-my-graduation-cap-runs-rust/

Comments URL: https://news.ycombinator.com/item?id=48116207

Points: 86

Comments: 24

How do you create a memorable poster for top-tier conferences (ICML/ICLR/NeurIPS etc.)? [D]

Hello everyone,

I'm presenting at a top-tier conference for the first time and having a very hard time coming up with an appropriate design for my poster.

Everything I do seems basic and banal. My paper is more theory-oriented, and apart from putting math formulas in bold in the middle, I am not sure what the best way is to design the poster. Even the sizing choice is complicated, as ICML gives 3 different recommendations to pick from, and on my computer I can’t tell what the PowerPoint slide will look like printed at those dimensions.

Printing a poster costs nearly $100 CAD, so there’s no room for trial and error.

So if anyone has any tips on how to do it properly, please share. I have been using PowerPoint, but perhaps I should switch to Canvas? Or does anyone have other software to recommend?

submitted by /u/DazzlingPin3965


When "idle" isn't idle: how a Linux kernel optimization became a QUIC bug

Article URL: https://blog.cloudflare.com/quic-death-spiral-fix/

Comments URL: https://news.ycombinator.com/item?id=48116064

Points: 29

Comments: 1

Kraftwerk's radical 1976 track

Article URL: https://www.bbc.com/culture/article/20260511-kraftwerks-radical-1976-track-radioactivity-became-an-anti-nuclear-anthem

Comments URL: https://news.ycombinator.com/item?id=48115823

Points: 84

Comments: 26

Tell NYT, Atlantic, USA Today to keep Wayback Machine

Article URL: https://www.savethearchive.com/newsleaders/

Comments URL: https://news.ycombinator.com/item?id=48115807

Points: 210

Comments: 50

Restore full BambuNetwork support for Bambu Lab printers

Article URL: https://github.com/FULU-Foundation/OrcaSlicer-bambulab

Comments URL: https://news.ycombinator.com/item?id=48115127

Points: 242

Comments: 96

EFF to 4th Circuit: Electronic Device Searches at the Border Require a Warrant

Article URL: https://www.eff.org/deeplinks/2026/05/eff-fourth-circuit-electronic-device-searches-border-require-warrant

Comments URL: https://news.ycombinator.com/item?id=48115059

Points: 130

Comments: 18

Scrcpy v4.0

Article URL: https://github.com/Genymobile/scrcpy/releases/tag/v4.0

Comments URL: https://news.ycombinator.com/item?id=48114356

Points: 56

Comments: 7

How to make your text look futuristic (2016)

Article URL: https://typesetinthefuture.com/2016/02/18/futuristic/

Comments URL: https://news.ycombinator.com/item?id=48113895

Points: 241

Comments: 29

CERT is releasing six CVEs for serious security vulnerabilities in dnsmasq

Article URL: https://lists.thekelleys.org.uk/pipermail/dnsmasq-discuss/2026q2/018471.html

Comments URL: https://news.ycombinator.com/item?id=48112042

Points: 257

Comments: 120

Show HN: Needle: We Distilled Gemini Tool Calling into a 26M Model

Hey HN, Henry here from Cactus. We open-sourced Needle, a 26M parameter function-calling (tool use) model. It runs at 6000 tok/s prefill and 1200 tok/s decode on consumer devices.

We were always frustrated by the little effort made towards building agentic models that run on budget phones, so we conducted investigations that led to an observation: agentic experiences are built upon tool calling, and massive models are overkill for it. Tool calling is fundamentally retrieval-and-assembly (match query to tool name, extract argument values, emit JSON), not reasoning. Cross-attention is the right primitive for this, and FFN parameters are wasted at this scale.
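To make the "retrieval-and-assembly" framing concrete, here is a toy, rule-based sketch of the three steps the post names: match the query to a tool name, extract argument values, emit JSON. It is not how Needle works (Needle learns these steps with attention layers); the tool registry, keywords, and regexes are all hypothetical.

```python
import json
import re

# Hypothetical tool registry: keywords used for matching, plus one regex per argument.
TOOLS = {
    "set_alarm": {
        "keywords": {"alarm", "wake"},
        "args": {"time": re.compile(r"\b(\d{1,2}:\d{2})\b")},
    },
    "send_message": {
        "keywords": {"message", "text", "send"},
        "args": {
            "recipient": re.compile(r"\bto (\w+)"),
            "body": re.compile(r'"([^"]*)"'),
        },
    },
}


def retrieve_tool(query: str) -> str:
    """Step 1: match the query to a tool name by keyword overlap."""
    words = set(re.findall(r"\w+", query.lower()))
    return max(TOOLS, key=lambda name: len(words & TOOLS[name]["keywords"]))


def extract_args(tool: str, query: str) -> dict:
    """Step 2: pull argument values out of the query text."""
    args = {}
    for arg_name, pattern in TOOLS[tool]["args"].items():
        match = pattern.search(query)
        if match:
            args[arg_name] = match.group(1)
    return args


def assemble_call(query: str) -> str:
    """Step 3: emit the tool call as JSON."""
    tool = retrieve_tool(query)
    return json.dumps({"name": tool, "arguments": extract_args(tool, query)})


print(assemble_call('Send a text to Alice saying "running late"'))
```

None of the three steps needs multi-step reasoning, which is the intuition behind using a small model for them.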

Simple Attention Networks: the entire model is just attention and gating, no MLPs anywhere. Needle is an experimental run for single-sh…

Quack: The DuckDB Client-Server Protocol

Article URL: https://duckdb.org/2026/05/12/quack-remote-protocol

Comments URL: https://news.ycombinator.com/item?id=48111765

Points: 215

Comments: 47

Dead.Letter (CVE-2026-45185) – How XBOW found an unauthenticated RCE on Exim

Article URL: https://xbow.com/blog/dead-letter-cve-2026-45185-xbow-found-rce-exim

Comments URL: https://news.ycombinator.com/item?id=48111748

Points: 63

Comments: 33

Reimagining the mouse pointer for the AI era

Article URL: https://deepmind.google/blog/ai-pointer/

Comments URL: https://news.ycombinator.com/item?id=48111581

Points: 160

Comments: 131

Googlebook

https://www.reddit.com/r/Android/comments/1tb8xls/introducin...

Comments URL: https://news.ycombinator.com/item?id=48111545

Points: 649

Comments: 1100

Show HN: Agentic interface for mainframes and COBOL

Hi HN, we’re Sai and Aayush, and we’re building Hypercubic (https://www.hypercubic.ai/), bringing AI tools to the mainframe and COBOL world. (We did a Launch HN last year: https://news.ycombinator.com/item?id=45877517.) Today we’re launching Hopper, an agentic development environment for mainframes.

You can download it here: https://www.hypercubic.ai/hopper, and you can also request access and immediately get a mainframe user account to play with.

There's also a video runthrough at https://www.youtube.com/watch?v=q81L5DcfBvE.

Mainframes still run a surprising amount of critical infrastructure: banking, payments, insurance, airlines, government programs, logistics, and core operations at large institutions. Many of these systems are decades old, but they continue to process enormous transac…

Show HN: Gigacatalyst – Extend your SaaS with an embedded AI builder

Hi HN, I’m Namanyay from Gigacatalyst (link: https://gigacatalyst.com/). Gigacatalyst allows sales, CS, and users to build one-off features, so your SaaS can support long-tail customer workflows and engineers aren’t pulled away from the roadmap.

When you sell software to large businesses, you realize that each customer needs their own workflow and features. Traditionally, this either means long engineering roadmaps or the customers end up using workarounds.

But what if everyone could build their critical missing features just by talking to an AI? That’s what we do at Gigacatalyst. We provide an AI customization layer for your customers, CS team, and sales team to build these missing critical workflows without needing any engineers at all. Think Lovable, but built on top of YOUR platform.

W…

The Future of Obsidian Plugins

Article URL: https://obsidian.md/blog/future-of-plugins/

Comments URL: https://news.ycombinator.com/item?id=48109970

Points: 325

Comments: 133

Launch HN: Voker (YC S24) – Analytics for AI Agents

Hey HN, we're Alex and Tyler, co-founders of Voker.ai (https://voker.ai/), an agent analytics platform for AI product teams. Voker gives full visibility into what users are asking of your agents, and whether your agents are delivering, without having to dig through logs. Our main product is a lightweight SDK that is LLM stack agnostic and purpose-built for agent products. (https://app.voker.ai/docs)

Agent Engineers and AI product teams don’t have the right level of visibility into agent performance in production, which results in bad user experiences, churn, and hundreds of hours wasted with spot checks to find and debug issues with agent configurations.

Demo: https://www.tella.tv/video/vid_cmoukcsk1000i07jgb4j65u67/vie...

We recently conducted a survey of YC Founders and 90%+ of responden…

Software Internals Book Club

Article URL: https://eatonphil.com/bookclub.html

Comments URL: https://news.ycombinator.com/item?id=48103511

Points: 4

Comments: 0

Fake building: Claude wrote 3k lines instead of import pywikibot

Article URL: https://fireflysentinel.github.io/posts/fake-building-claude-3000-lines/

Comments URL: https://news.ycombinator.com/item?id=48103459

Points: 21

Comments: 9

Claude Platform on AWS

Article URL: https://claude.com/blog/claude-platform-on-aws

Comments URL: https://news.ycombinator.com/item?id=48103042

Points: 37

Comments: 19

They Live (1988) inspired Adblocker

Article URL: https://github.com/davmlaw/they_live_adblocker

Comments URL: https://news.ycombinator.com/item?id=48102700

Points: 22

Comments: 1

Show HN: Safe-install – safer NPM installs with trusted build dependencies

In light of the ongoing npm supply chain compromises, I built safe-install:

https://www.npmjs.com/package/@gkiely/safe-install

It adds a couple of protections I wanted from npm that are not built in.

Similar to Bun’s trusted dependencies, it lets you disable install scripts by default and define a list of dependencies that are allowed to run build/install scripts:

https://bun.com/docs/guides/install/trusted

It also supports blocking exotic sub-dependencies, similar to pnpm’s `blockExoticSubdeps` setting:

https://gajus.com/blog/3-pnpm-settings-to-protect-yourself-f...

I was hoping npm would eventually add something like this, but it does not seem to be happening soon, so I made a small package for it.
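A minimal sketch of the "trusted dependencies" idea, assuming you only want to audit an existing `node_modules` tree: list every installed package that declares an install-time lifecycle script and flag the ones missing from an allowlist. This is not safe-install's implementation; the function names and the allowlist are hypothetical.

```python
import json
from pathlib import Path

# npm lifecycle scripts that run automatically when a package is installed.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def find_install_scripts(node_modules: Path) -> dict[str, list[str]]:
    """Map each installed package name to the install hooks it declares."""
    found: dict[str, list[str]] = {}
    for manifest in node_modules.rglob("package.json"):
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # skip unreadable or malformed manifests
        if not isinstance(data, dict):
            continue
        scripts = data.get("scripts")
        if not isinstance(scripts, dict):
            continue
        hooks = [hook for hook in INSTALL_HOOKS if hook in scripts]
        if hooks and "name" in data:
            found[data["name"]] = hooks
    return found


def untrusted(found: dict[str, list[str]], trusted: set[str]) -> dict[str, list[str]]:
    """Keep only packages that run install hooks but are not on the allowlist."""
    return {name: hooks for name, hooks in found.items() if name not in trusted}
```

npm's own `--ignore-scripts` flag disables these hooks for a whole install; an audit like this helps decide which packages would need re-enabling. It does not catch the implicit `node-gyp rebuild` npm runs when a package ships a `binding.gyp` without its own install script.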

Comments URL: https://news.ycombinator.com/item?id=48102636

Points: 7

Comments: 0

I created minimal one-file implementations (160 LOC) of the JEPA family (ijepa, vjepa, vjepa2, cjepa) for educational purposes [P]

Hi all,

I made my own minimal implementation of JEPA algorithms.

Making things minimal and removing everything needed for scaling an algorithm has always helped my understanding, so I stripped out everything but the algorithmic parts. What's left is 160-200 lines of code that distill the essence of the mathematics.

This makes it easy to compare the math in the paper with the code and to see how it can be implemented in PyTorch.

I added [algo]_tutorial.md files to help with understanding.

https://github.com/keon/jepa
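A rough sketch of the core JEPA objective, assuming linear maps in place of the ViT encoders and a deliberately simplified predictor: the context encoder sees only the visible patches, the predictor guesses the target encoder's embeddings of the masked patches from the pooled context plus a positional embedding, the loss is an L2 distance in embedding space, and the target encoder is an exponential moving average (EMA) of the context encoder. This is not the author's code; all names and sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
num_patches, patch_dim, embed_dim = 16, 8, 4

# Linear stand-ins for the context encoder, target encoder, and predictor.
context_W = rng.normal(size=(patch_dim, embed_dim))
target_W = context_W.copy()  # the target encoder starts as a copy
predictor_W = rng.normal(size=(embed_dim, embed_dim))
pos_emb = rng.normal(size=(num_patches, embed_dim))  # tells the predictor where to predict


def jepa_loss(patches, context_idx, target_idx):
    """Mean squared error between predicted and target embeddings of the masked patches."""
    # The context encoder only sees the visible patches.
    context_emb = patches[context_idx] @ context_W
    # The target encoder embeds the masked patches (treated as a constant, no gradient).
    target_emb = patches[target_idx] @ target_W
    # Predictor: pooled context mapped in latent space, plus a positional embedding per target.
    pooled = context_emb.mean(axis=0, keepdims=True)        # shape (1, embed_dim)
    predicted = pooled @ predictor_W + pos_emb[target_idx]  # broadcasts to (n_targets, embed_dim)
    return float(np.mean((predicted - target_emb) ** 2))


def ema_update(target, online, momentum=0.996):
    """The target encoder tracks the context encoder: target <- m * target + (1 - m) * online."""
    return momentum * target + (1.0 - momentum) * online
```

In a training loop only `context_W` and `predictor_W` would receive gradients from `jepa_loss`; `target_W` would be refreshed with `ema_update(target_W, context_W)` after each step, which is what keeps the objective from collapsing to a trivial solution.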

submitted by /u/kwk236


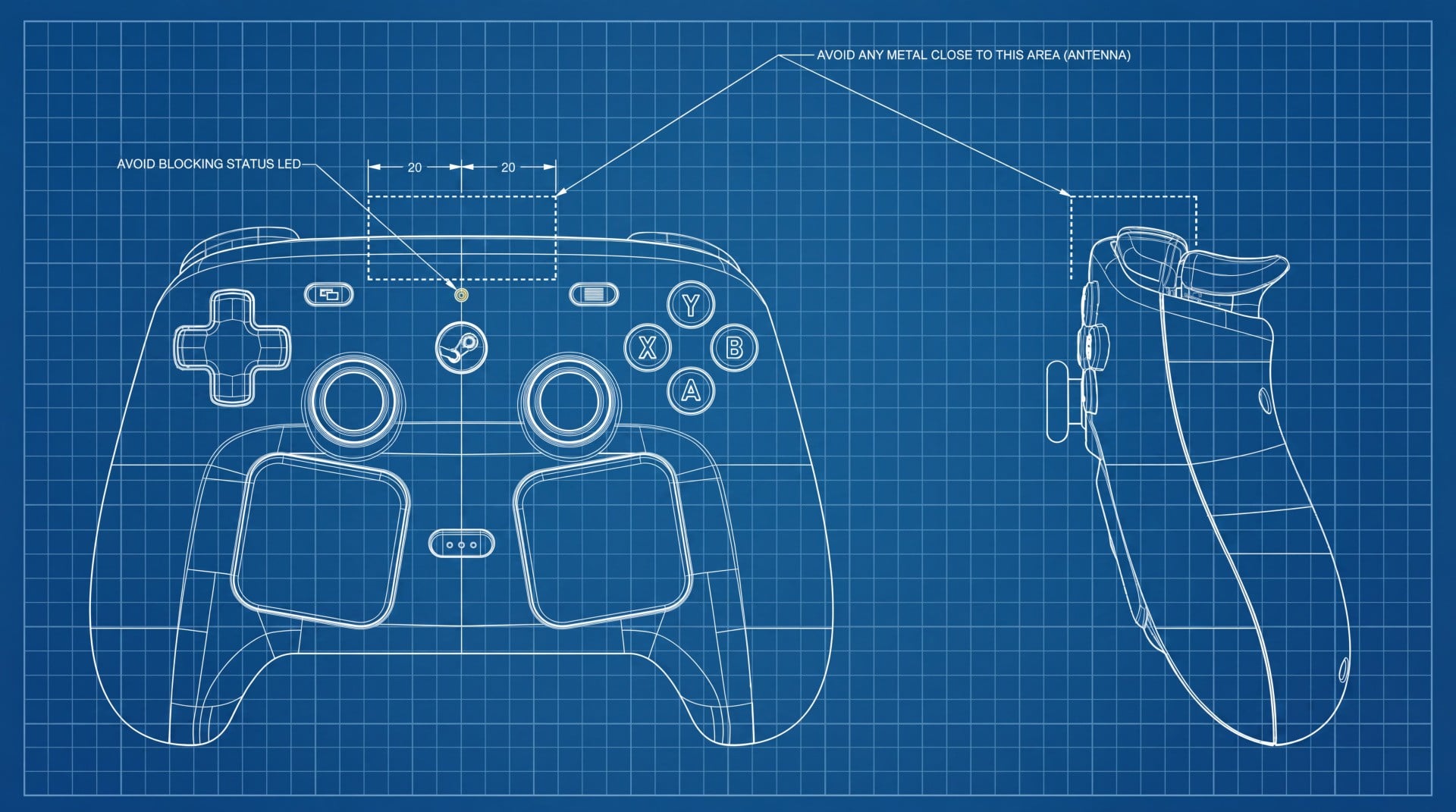

Steam Recommender using similarity! (Undergraduate Student Project) [P]

(DISCLAIMER: I accidentally deleted the last post on this subreddit; my apologies if this is your second time seeing it.)

Last year I made a post about my Steam recommender. That one was great and served its purpose of showing many people new games, but this new version is much more functional!

I love making recommendation systems that tell the user WHY they got the recommendation.
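A minimal sketch of a similarity recommender that explains itself, assuming each game is described by a set of fine-grained descriptors: rank by cosine similarity and report the shared descriptors as the "why". The catalogue below is hypothetical (only the Persona 4 "city vibes" and "jazz fusion" descriptors come from the post), and this is not the author's method.

```python
from math import sqrt

# Hypothetical catalogue of fine-grained descriptors (not real Steam tags).
GAMES = {
    "Persona 4": {"jrpg", "city vibes", "jazz fusion", "social sim"},
    "Game A": {"jrpg", "city vibes", "jazz fusion", "stylish ui"},
    "Game B": {"action", "city vibes", "jazz fusion"},
    "Game C": {"evolution", "god game", "sandbox"},
}


def cosine(a: set[str], b: set[str]) -> float:
    """Cosine similarity of two binary descriptor vectors, represented as sets."""
    if not a or not b:
        return 0.0
    return len(a & b) / sqrt(len(a) * len(b))


def recommend(liked: str, top_k: int = 2) -> list[tuple[str, float, list[str]]]:
    """Rank the other games by similarity; the shared descriptors are the 'why'."""
    ranked = sorted(
        (
            (cosine(GAMES[liked], tags), title, sorted(GAMES[liked] & tags))
            for title, tags in GAMES.items()
            if title != liked
        ),
        reverse=True,
    )
    return [(title, round(score, 3), why) for score, title, why in ranked[:top_k]]


for title, score, why in recommend("Persona 4"):
    print(f"{title} ({score}): shares {', '.join(why)}")
```

Because the explanation is just the set intersection, it comes for free with the score; with weighted or learned features the "why" would instead be the largest per-feature contributions.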

During a Steam sale event, I always find myself looking for new video games to play. To find one, I would try to whittle the list down using Steam tags, but the tag system is very broad: "action" could apply to many, many games.

That got me thinking, what aspects do I like about my favorite games?

Well I like Persona 4 because of the city vibes and jazz fusion,

Spore because of …

TabPFN-3 just released: a pre-trained tabular foundation model for up to 1M rows [R][N]

TabPFN-3 was released today, the next iteration of the tabular foundation model, originally published in Nature.

Quick recap for anyone new to TabPFN: TabPFN predicts on tabular data in a single forward pass - no training, no hyperparameter search, no tuning. Built on TabPFN-2.5 (Nov 2025) and TabPFNv2 (Nature, Jan 2025), which together crossed 3M downloads and 200+ published applications.

What's new:

Scale: 1M rows on a single H100 (10x larger than 2.5). A reduced KV cache (~8GB per million rows per estimator) and row-chunked inference make this practical on a single GPU.

Speed: 10x-1000x faster inference than previous versions. 120x on SHAP via KV caching

Thinking Mode (API only): test-time compute pushes predictions further via one-time extra fitting at inference. Beats every non-TabPFN method on TabArena by over 200 Elo, including 4-hour-tuned AutoGluon 1.5 extreme. Gap more than doubles to 420 Elo on the larger-data slice.

Accuracy: it has a 93% win rate over classical ML on TabArena

Many-class: native non-parametric retrieval decoder supporting up to 160 classes

Calibrated quantile regression: bar-distribution regression head produces calibrated quantile predictions in a single forward pass

Lifts adjacent tasks: time-series, interpretability, and new SOTA on relational benchmarks.

3 deployment paths: API, enterprise licensing, and open-source weights (permissive for research and academic evaluation)

You can try it here or read the model report here. Happy to answer questions in the comments.

submitted by /u/rsesrsfh


I Found a Hidden Ratio in Transformers That Predicts Geometric Stability [R]

I have analyzed some decoder transformer models using Lyapunov spectral analysis and found that the ratio of the MLP spectral norm to the attention spectral norm strongly indicates whether or not a model will eventually collapse to rank 1 by the final layers.

I found that the spectral ratio is best kept between roughly 0.5 and 2 to keep the model stable through the final layers.
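A minimal sketch of how such a ratio could be checked per layer, assuming it is the largest singular value of an MLP weight matrix divided by that of an attention weight matrix in the same layer. The post does not say which matrices are used (an MLP has at least an up- and a down-projection, and a Lyapunov analysis may work on Jacobians rather than raw weights), so the choice of matrices is an assumption; the 0.5 to 2 band is the one reported in the post.

```python
import numpy as np


def spectral_norm(weight: np.ndarray) -> float:
    """Largest singular value of a weight matrix (its operator 2-norm)."""
    return float(np.linalg.norm(weight, ord=2))


def spectral_ratio(mlp_weight: np.ndarray, attn_weight: np.ndarray) -> float:
    """Ratio of MLP to attention spectral norms for one layer (assumed definition)."""
    return spectral_norm(mlp_weight) / spectral_norm(attn_weight)


def flag_layers(layers, low=0.5, high=2.0):
    """Return indices of layers whose ratio falls outside the [low, high] band."""
    return [
        i
        for i, (mlp_w, attn_w) in enumerate(layers)
        if not low <= spectral_ratio(mlp_w, attn_w) <= high
    ]
```

For large matrices a few steps of power iteration would approximate the same norm more cheaply than the full SVD that `np.linalg.norm(..., ord=2)` performs.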

Paper/Github repo: https://github.com/yousef-rafat/the-1-1-rule

submitted by /u/Otaku_7nfy


ICML Visa issues [D]

Has anyone who is applying for a Korean visa for ICML been asked for the conference’s Business Registration Number? The ICML website explicitly states that it cannot provide the BRC, so I wanted to ask how others handled this.

submitted by /u/No_Cardiologist7609


Cache-testing software for LLM-provider-style tiered ephemeral caches? [D]

I'm looking for a cache simulator / benchmark suite suited to the kind of tiered ephemeral cache that LLM providers use — e.g. Anthropic's 4-tier prompt cache, where context sits across several tiers with different residency windows, costs, and eviction rules.

I've already tried libCacheSim. It's a solid piece of software for classical caches (LRU, FIFO, ARC, SIEVE, S3-FIFO, W-TinyLFU, Belady oracle, plugin API, trace replay), and I got a plugin + synthetic trace working against it. But it seems fundamentally aimed at single, flat caches:

One cache, not a hierarchy of tiers with different costs

No notion of partial / multi-tier residency of the same object

Misses are uniform-cost — no way to express "miss to L1 vs miss to L3 vs full recompute," which is the whole point in LLM prompt caching

Trace model is atomic get/put, not edit streams where cached objects mutate in place

No first-class support for token-weighted object sizes

So it works as a baseline comparator, but it's not really the right shape for evaluating LLM-cache policies.

Does anyone know of cache-testing software specifically targeting LLM-provider-style caches? Something that models multiple tiers with per-tier cost/residency, tokenised objects, and edit-driven workloads would be ideal. Academic code, research prototypes, internal tools that got open-sourced — all welcome. Even partial matches (e.g. KV-cache simulators for inference servers) would be useful pointers.
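A minimal sketch of the shape the post asks for, assuming hypothetical tiers that each have a token capacity, a residency window (TTL), and a per-token hit cost, plus a separate per-token cost for a full recompute. Entries are LRU within a tier, overflow demotes to the next tier, and a hit anywhere promotes back to the top. An object lives in exactly one tier here, and edit streams are not modelled; this is not any provider's real policy, and all names and numbers are illustrative.

```python
from collections import OrderedDict
from dataclasses import dataclass, field


@dataclass
class Tier:
    """One tier: token capacity, residency window, and cost per token served from it."""
    name: str
    capacity_tokens: int
    ttl: float             # an entry expires after this long without being accessed
    cost_per_token: float  # charged when a request hits in this tier
    # key -> (size in tokens, time of last access), kept in LRU order (oldest first)
    entries: OrderedDict = field(default_factory=OrderedDict)

    def used(self) -> int:
        return sum(tokens for tokens, _ in self.entries.values())


class TieredCache:
    """Toy hierarchy: LRU per tier, overflow demotes downward, hits promote to the top."""

    def __init__(self, tiers: list[Tier], recompute_cost_per_token: float):
        self.tiers = tiers  # ordered from fastest to slowest
        self.recompute_cost_per_token = recompute_cost_per_token
        self.total_cost = 0.0

    def _expire(self, now: float) -> None:
        for tier in self.tiers:
            stale = [k for k, (_, last) in tier.entries.items() if now - last > tier.ttl]
            for key in stale:
                del tier.entries[key]

    def _insert(self, level: int, key, tokens: int, last_access: float) -> None:
        if level >= len(self.tiers):
            return  # pushed past the last tier: evicted entirely
        tier = self.tiers[level]
        tier.entries[key] = (tokens, last_access)
        tier.entries.move_to_end(key)
        while tier.used() > tier.capacity_tokens:
            old_key, (old_tokens, old_last) = tier.entries.popitem(last=False)
            self._insert(level + 1, old_key, old_tokens, old_last)

    def access(self, key, tokens: int, now: float) -> None:
        """Serve one request: pay the cost of the tier it hits, or a full recompute."""
        self._expire(now)
        for tier in self.tiers:
            if key in tier.entries:
                del tier.entries[key]
                self.total_cost += tokens * tier.cost_per_token
                break
        else:
            self.total_cost += tokens * self.recompute_cost_per_token
        self._insert(0, key, tokens, now)
```

Replaying a trace through `access` and comparing `total_cost` across tier configurations gives the "miss to L1 vs miss to L3 vs full recompute" distinction that a flat, uniform-cost simulator cannot express.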

submitted by /u/flatmax


Interaction Models from Thinking Machines Lab [P]

submitted by /u/Agitated-Ad809


Follow-up on the TranslateGemma subtitle benchmark: human review of segments rated "clean" by MetricX-24 and COMETKiwi [D]

A few weeks ago I shared the results of a benchmark here comparing 6 LLMs on subtitle translation, scored with two reference-free QE metrics - MetricX-24 (~13B mT5-XXL) and COMETKiwi (~10.7B XLM-R-XXL) - combined into a TQI index. Posting a follow-up because we did human review afterwards, and the result is worth discussing.

The original benchmark put TranslateGemma-12b first in every language pair. The natural question: are those high scores accurate, or are the metrics insensitive in their high-confidence zone? These metrics correlate well with human judgment at the population level (that's what they're trained for), but population-level correlation doesn't tell you whether the segments they call "clean" are actually clean.

So we ran the check directly. 21 English subtitle segments fro…

Created a free tool to check what PII your LLM prompts are leaking before they hit the provider

Most people don't realize how much personal data ends up in their AI prompts without thinking about it. Customer names, medical details, internal company info. It all goes to the provider's servers.

Free to use. Let me know how well this works. aisecuritygateway.ai/ai-leak-checker
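A minimal sketch of the simplest layer of such a checker, assuming regex detection of structured identifiers (emails and phone numbers) and redaction to typed placeholders before a prompt is sent. This is not how the linked tool works; the patterns are hypothetical and will miss many formats, and things like customer names or medical details need NER or classifiers rather than regexes.

```python
import re

# Hypothetical patterns for two structured PII types; real detectors cover far more.
PII_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def find_pii(prompt: str) -> dict[str, list[str]]:
    """Return the matches for each PII type found in the prompt."""
    hits = {kind: pattern.findall(prompt) for kind, pattern in PII_PATTERNS.items()}
    return {kind: found for kind, found in hits.items() if found}


def redact(prompt: str) -> str:
    """Replace each match with a typed placeholder before the prompt leaves the machine."""
    for kind, pattern in PII_PATTERNS.items():
        prompt = pattern.sub(f"[{kind.upper()}]", prompt)
    return prompt
```

The phone pattern is deliberately loose, so it will also flag things like ISO dates; a gateway would usually trade some false positives for fewer leaks.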

submitted by /u/Bootes-sphere


Will AI turn us all into hipsters and artisans?

submitted by /u/technocraticnihilist


Gemini just admitted that Islam promotes hatred

what do we think about that?

https://preview.redd.it/e96kvejo7s0h1.png?width=713&format=png&auto=webp&s=93988b18282c3c1883eb339c5d2a6babbcaabd92

submitted by /u/koczan147


The AI labs whose models are eroding democratic trust are the same labs now embedding themselves in government.

This piece lays out a pretty dark cycle that goes way beyond "fake videos."

AI companies are running a feedback loop where their tools destroy public trust in reality, and then they use that collapse to sell AI governance as the "objective" replacement for a broken democracy.

Essentially:

- AI labs (OpenAI, Anthropic) make truth impossible to verify.

- The exhaustion makes voters give up on human leaders.

- The pivot is these same companies signing massive military and government contracts to run the state.

The "Singularity" isn't a machine waking up; it’s a tired civilization handing the keys to a black box because we’re too burnt out to govern ourselves.

Happy to hear your thoughts: https://aiweekly.co/issues/100-years-from-now-the-last-election

Alexis

submitted by /u/Justgototheeffinmoon


Anti-AI Workplaces

Question for those of you who use AI: How do you handle bosses who hate AI? Or workplaces that show strong AI bias?

Are those workplaces making any efforts to make processes less complicated so people won't feel the need to use AI to keep up with demands? This could be things like creating templates and workflows.

I think AI wouldn't have as strong a grip if companies had actually spent time on information architecture, but they didn't, and now SOME want to complain about workers adapting to the lack of structure.

Edited to add: I am pro-AI, but just speaking to why I think there's so much push back from some companies.

submitted by /u/Flashy-Pitch-4611


I made an agentic "Daily Brief" for my kids with a receipt printer

What it does: agents gather and curate data and send it to a Wi-Fi-enabled receipt printer (phenol-free paper).

At 1:00am a cron triggers generation of data for all 3 kids (unique data sources per kid where applicable).

A sidecar web service renders the data to templates, screenshots it, converts it to 1-bit with dithering and saves it back to the agent’s thread filesystem.
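The post doesn't say which dithering algorithm the sidecar uses. A minimal sketch of one common choice, Floyd-Steinberg error diffusion, on a grayscale image stored as nested lists; the function name and the threshold are assumptions. In practice, Pillow's `Image.convert("1")` applies Floyd-Steinberg dithering by default.

```python
def floyd_steinberg(gray: list[list[float]], threshold: float = 128.0) -> list[list[int]]:
    """Convert a grayscale image (values 0-255) to 1-bit with Floyd-Steinberg dithering.

    Returns rows of 0 (black) and 1 (white).
    """
    height, width = len(gray), len(gray[0])
    img = [[float(v) for v in row] for row in gray]  # working copy that accumulates error
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            old = img[y][x]
            new = 255.0 if old >= threshold else 0.0
            out[y][x] = 1 if new else 0
            err = old - new
            # Push the quantisation error onto unvisited neighbours (7/16, 3/16, 5/16, 1/16).
            if x + 1 < width:
                img[y][x + 1] += err * 7 / 16
            if y + 1 < height:
                if x > 0:
                    img[y + 1][x - 1] += err * 3 / 16
                img[y + 1][x] += err * 5 / 16
                if x + 1 < width:
                    img[y + 1][x + 1] += err * 1 / 16
    return out
```

Diffusing the error instead of thresholding each pixel independently is what lets a printer with only black dots reproduce mid-grey areas as a dot pattern of the right density.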

Button presses (one per kid) then find a matching report for today's date (and trigger a generation if it's missing for some reason) and send it to the printer. Delay between button press and print is between 2-5 seconds.

Morning daily briefs per kid at the press of a button! Fun, and the kids love it!

(This demo print is using mock child data — not real information).

submitted by /u/Boydbme


Which "personality" should I give Claude?

I've been using Claude Pro for about a month now, and I want to try assigning it a "personality". I've narrowed it down to 4 pop-culture characters who have artificial intelligence as a central aspect of their identity; I chose these because that shared trait should, in theory, make them the easiest for Claude to adopt:

- Cortana from the *Halo* franchise

- Data from the *Star Trek* franchise

- HK-47 from the *Star Wars* franchise

- Jarvis from the *Marvel* franchise

Optimally, I'd go for a combination of all 4, but in the community's experience and/or opinion, which ought I choose?

submitted by /u/GTA-CasulsDieThrice


Google detects hackers using AI-generated code to bypass 2FA with zero-day vulnerability

submitted by /u/Odd-Onion-6776


I built a macOS clone in the browser with a single prompt

I gave MiMo-V2.5-Pro a single prompt and it built a full macOS Sequoia clone in the browser. Here's my honest take as someone who uses agentic coding daily.

The prompt was straightforward:

"A pixel-perfect macOS Sequoia desktop clone built entirely in the browser. Interactive window management, 54 native-style apps, Dock with physics-based magnification, Spotlight, Launchpad, and a working Safari browser."

And it delivered. A fully functional macOS UI running in the browser, complete with a working Dock, app windows, Spotlight, and Launchpad all rendered from a single prompt. You can see the result in the screenshots above.

Why this matters for agent workflows:

The hardest part of agentic coding isn't raw capability, it's context retention across long, complex tasks. MiMo-V2.5-Pro held the full spec across the entire session without drifting or losing track of the original instructions. That's the thing that breaks most models on real projects.

I ran this through OpenCode. Setup was trivial since the model exposes OpenAI-compatible endpoints, so it dropped straight into my existing stack.

The open-source angle:

MIT License. You can use their API or self-host. For teams building agent pipelines that need a capable model without vendor lock-in, this is worth evaluating.

On ClawEval it leads the open-source field while using significantly fewer tokens than comparable frontier models. For long agentic runs, that efficiency compounds fast.

Bottom line: Not a toy. If you're running serious agent workflows, give it a real test.

submitted by /u/Direct-Attention8597


China Sought Access to Anthropic’s Newest A.I. The Answer Was No.

submitted by /u/ThereWas


AI May Reshape Institutions More Than It Replaces Jobs

I think the next big AI debate won’t be about intelligence.

It will be about representation.

Right now, most AI conversations focus on models:

Which model is smarter, or which agent is faster/better or which AI can automate more work?

But enterprises/institutions don’t fail because they lack intelligence alone.

They fail because they represent reality poorly.

A bank may have thousands of dashboards and still not understand customer risk properly.

A government may collect massive amounts of data and still fail to represent what citizens are actually experiencing.

A company may have advanced AI copilots while teams still operate on fragmented assumptions, outdated workflows, and conflicting versions of reality.

That’s why I increasingly think the future architecture of AI systems ma…

My god there is an enormous crash just waiting to happen

I had a work version of GPT do a very simple spreadsheet summary task for me yesterday. It took it 5 minutes to do it. I could probably have done it myself in 30 or so minutes. The heavily subsidised token cost of that task? 10 dollars. That's with a 10x subsidy. The actual compute cost was about 100 dollars. There's something seriously wrong there. It's going to crash and crash HARD.

EDIT: because people think I'm lying, or are just interested: the spreadsheet had 45 sheets. Each sheet had roughly 500 x 50 populated cells. Formatting was not exactly standard across all sheets. The prompt was something like "there is a labelled column in each sheet; give me a simple list of all the items from all the sheets in that column and ignore duplicates." We can choose which model to use. The model I chose was one of the newer ones; I honestly can't remember which one, possibly GPT 5.3. It took 5 minutes or more to do so, and the stated cost for the task was 10 dollars, possibly even more. I can't recall the token amount.
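For comparison, the task as described fits in a short deterministic script. A minimal sketch, assuming the workbook has already been loaded as a dict of sheet name to rows and that each sheet's header sits somewhere in its first few rows (since formatting varied); the function name and the `header_search_rows` parameter are hypothetical.

```python
def unique_column_values(sheets: dict[str, list[list]], label: str, header_search_rows: int = 5) -> list:
    """Collect the values under the column headed `label` in every sheet, ignoring duplicates.

    `sheets` maps sheet name -> rows (lists of cell values). The header row is looked
    for in the first `header_search_rows` rows; sheets without the label are skipped.
    Values are returned in first-seen order.
    """
    target = label.strip().lower()
    seen = set()
    result = []
    for rows in sheets.values():
        header_idx = col = None
        for i, row in enumerate(rows[:header_search_rows]):
            normalized = ["" if cell is None else str(cell).strip().lower() for cell in row]
            if target in normalized:
                header_idx, col = i, normalized.index(target)
                break
        if col is None:
            continue  # no matching header in this sheet
        for row in rows[header_idx + 1:]:
            value = row[col] if col < len(row) else None
            if value is None or value == "":
                continue  # skip blank cells
            if value not in seen:
                seen.add(value)
                result.append(value)
    return result
```

With openpyxl, the input dict could be built as `{ws.title: [list(r) for r in ws.iter_rows(values_only=True)] for ws in load_workbook(path, read_only=True).worksheets}`. Matching on values is exact, so "Apple" and "apple" count as different items.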

EDIT 2: I just asked web GPT to estimate the cost of the above on a newer version of GPT and it came back with 17 dollars for GPT 4 and above. Try it yourself.

submitted by /u/reasonablejim2000


AI turning aggressive generalists into fucking institutions

bro this AI coding shit is actually insane.

today i spent hours rebuilding the architecture for the Institute for AI Economics website with Codex.

and i’m not talking about fake “vibe coding” nonsense.

actual architecture:

branches

PRs

Vercel deployments

sitemap

report infrastructure

SEO structure

research hub

future intelligence pipeline

and i fucked it up multiple times lol

merged the wrong branch

accidentally restored old content

basically nuked phase 1

had no clue what was happening for like 20 mins

then fixed it

rebuilt it

merged correctly

pushed to production

what’s crazy is not the coding part

it’s the leverage

like… i’m literally building an AI economics think tank while learning software deployment mechanics in real time

5 years ago this would’ve needed:

frontend dev

backend dev

PM

SEO person

infra guy

content strategist

now it’s just:

me + AI + enough willingness to break shit publicly

people still think AI is about “helping developers code faster”

nah

it’s turning aggressive generalists into fucking institutions

the scariest people over the next 5 years are gonna be operators who:

think clearly

move fast

learn publicly

tolerate chaos

and don’t wait for permission

because the cost of building has collapsed so hard it’s almost absurd

submitted by /u/houmanasefiau


Second mass-shooting AI chatbot court case arrives

The court cases alleging AI psychological harm have progressed from originally teen suicide, to adult suicide, to one adult murder-suicide, and most recently in the coordinated set of Stacey v. Altman / M.G. v. Altman / Younge v. Altman cases to adult mass shootings. I recently posted about that set of cases regarding the Tumbler Ridge Mass Shooting in Canada, and you can find that post here.

Now another mass-shooting AI chatbot federal case has been brought. On May 10, 2026 the case of Joshi v. OpenAI Foundation, et al. was filed in the Northern District of Florida, concerning the Florida State University shooting in April 2025 in which two were killed and six were wounded.

Like the Stacy/M.G./Younge mass-shooting cases, this new case steps back from the more aggressive allegations of e…

Foxconn Ransomware Attack Shows Nothing Is Safe Forever

Famous for helping build Apple’s iPhones, Foxconn just suffered another cyberattack, highlighting the perils of warehousing some of the world’s most valuable data.

submitted by /u/rkhunter_


Open-source CLI for testing LLM agents across prompt, tool, and replay boundaries

Sharing RedThread, an open-source CLI for AI red-team campaigns:

https://github.com/matheusht/redthread

The project is for staging/internal LLM apps and agent workflows. It is not a prompt shield and it is not claiming broad production enforcement.

What it does today:

runs PAIR, TAP, Crescendo, and GS-MCTS attack campaigns

records multi-step traces

scores results with JudgeAgent/rubric flows

generates candidate defenses from confirmed failures

replays exploit and benign cases before treating a defense as evidence

adds agentic checks for tool poisoning, confused deputy paths, canary propagation, and budget amplification

The part I care about most is evidence quality. A sealed dry-run replay, a live replay, and a live validation failure are different things. RedThread keeps those separate instead of flattening everything into pass/fail.

I am looking for security people who can poke holes in the model:

What attack classes should be fixtures?

What evidence would make a finding useful in a real review?

Where do LLM red-team tools get noisy or misleading?

submitted by /u/Apprehensive-Zone148


AI Vulnerability Research and the Fuzzer Era Déjà Vu

submitted by /u/Void_Sec


Explorer shows random letter/number filenames before copying my actual files — normal behavior?

Whenever I copy files from one drive to another in Windows, File Explorer sometimes shows random letter/number filenames (like A3E6F7) only during the copy process in the small file transfer window before showing the real filename. The strange names disappear once the transfer finishes and the copied files seem normal. Is this expected behavior, or could it indicate a problem with the drive or Windows?

submitted by /u/Embarrassed-Fig3045


Zscaler AI Security Capabilities ?

Has anyone used any of the AI capabilities within Zscaler?

- AI inventory & discovery

- Securing AI access - SaaS within AI Guard

- Securing AI app & infra - Private AI access with AI guard

They are quite new, however wanting to know if anyone had experience with them. They’ve not exactly been the best when releasing new features, so very curious.

submitted by /u/RangoNarwal


Disgruntled researcher who dropped BlueHammer and RedSun drops two new Windows 11 zero-days: A Bitlocker bypass, nicknamed YellowKey, and LPE, nicknamed GreenPlasma

Speaks for itself, take a look:

https://github.com/Nightmare-Eclipse/YellowKey

https://github.com/Nightmare-Eclipse/GreenPlasma

What other explanation is there for YellowKey other than a backdoor?

Oh also they say that next Tuesday there will be another big surprise. Keep your eyes peeled I guess.

submitted by /u/levu12


Cybersecurity statistics of the week (May 4th - May 10th)

Hi guys, I send out a weekly newsletter with the latest cybersecurity vendor reports and research, and thought you might find it useful, so sharing it here.

All the reports and research below were published between May 4th - May 10th.

You can get the below into your inbox every week if you want: https://www.cybersecstats.com/cybersecstatsnewsletter/

Big Picture Reports

The State of Agentic Cybersecurity (SimSpace)

If you needed more confirmation that confidence in security outcomes is often misplaced, here it is.

Key stats:

78% of security leaders report high confidence in their defenses, even though security teams score as low as 30% in Defensive Security Readiness exercises.

Only 29% of organizations conduct continuous simulation testing.

73% of organizations are using AI a…

Škoda warns of customer data breach after online shop hack

submitted by /u/Ordner

[link] [comments]

Google launches new Android security feature to help uncover spyware attacks

submitted by /u/rkhunter_

[link] [comments]

Fancy Bear: Stealing Credentials Invisibly

submitted by /u/DerBootsMann

[link] [comments]

Nightmare Eclipse has published Greenplasma and YellowKey

One is an LPE (though not a full PoC), the other is a BitLocker bypass. https://github.com/Nightmare-Eclipse

submitted by /u/CrimsonNorseman

[link] [comments]

Copilot Agent

Has anyone built any genuinely useful SOC/security-focused agents using Microsoft Copilot Studio or Security Copilot?

I’m currently experimenting with building agents to improve SOC workflows and investigations.

Interested to hear what others have actually built.

What’s been most useful operationally?

Any good ideas, lessons learned, or integrations worth exploring?

submitted by /u/Ajxxxttt

[link] [comments]

Anyone else exhausted by the nonstop AI hype?

Does anyone else feel overwhelmed by all this AI news all day, all week, all the time?

Every time I try to sneak a peek at what's happening in AI, it feels like whatever I just read is already obsolete and I need to move on to the next shiny toy.

It’s like there’s no breathing room... just constant announcements, tools, breakthroughs, and hot takes. I’m starting to wonder if keeping up is even possible, or if we’re all just chasing a moving target that never slows down.

How are you all dealing with this?

submitted by /u/Same_Beyond1260

[link] [comments]

Is It a Good Idea to Change Jobs Shortly After Getting Hired?

Right now, I am in a hybrid government contracting position and have been working there for a few months. I found a couple of jobs I would be interested in applying for that are not contracting and are fully remote.

I am not sure it would be a good idea to move to another job since I haven't been in the position long, but I want a long-term role without worrying about losing my current job. I plan to pursue additional certifications in this role to maximize my growth.

What are some thoughts on this?

submitted by /u/Baller2908

[link] [comments]

Chris Cochran at SANS Institute: AMA about the AI Security Maturity Model we just released.

I'm Chris Cochran (/u/Financial_Jicama_401), Field CISO and VP of AI Security at SANS Institute. I'm doing an AMA today about the AI Security Maturity Model we just released.

Before you click away, this isn't a marketing deck disguised as a framework. No buzzword bingo. No "AI will solve everything" nonsense.

Here's what this actually is: a structured way to figure out where your org honestly stands on AI security, and what to do next. It covers three things: protecting your AI systems, using AI in your security operations, and governing AI across the org.

Some context on why I built this:

- I kept seeing orgs claim they were "mature" on AI security with zero documentation to back it up. A 30-person company with a real policy and an inventory spreadsheet is in a better spot than an…

Canvas hack: company pays criminals to delete students' stolen data

submitted by /u/tides977

[link] [comments]

Instructure reaches 'agreement' with ShinyHunters to stop data leak

submitted by /u/rkhunter_

[link] [comments]

Switched to a grc role after a year in SOC L1

I just switched to GRC after one year of SOC L1 (MSSP).

First of all, thank god I escaped, 'cause that was the worst time I’ve ever had: 24/7 shifts and irregular weekends destroyed my social life, which is important to me.

Working a night shift on Sunday and a morning shift on Thursday is probably a crime in some countries, 'cause wtf.

Now I know that I will NEVER work in SOC ever again.

So now I've got two options: continue in GRC all the way, or switch to PT and/or red teaming, as I have the necessary certifications and skills, just not the experience.

GRC gods in this sub, please give your opinion/POV, as well as what career progression looks like on the GRC path.

submitted by /u/black13x

[link] [comments]

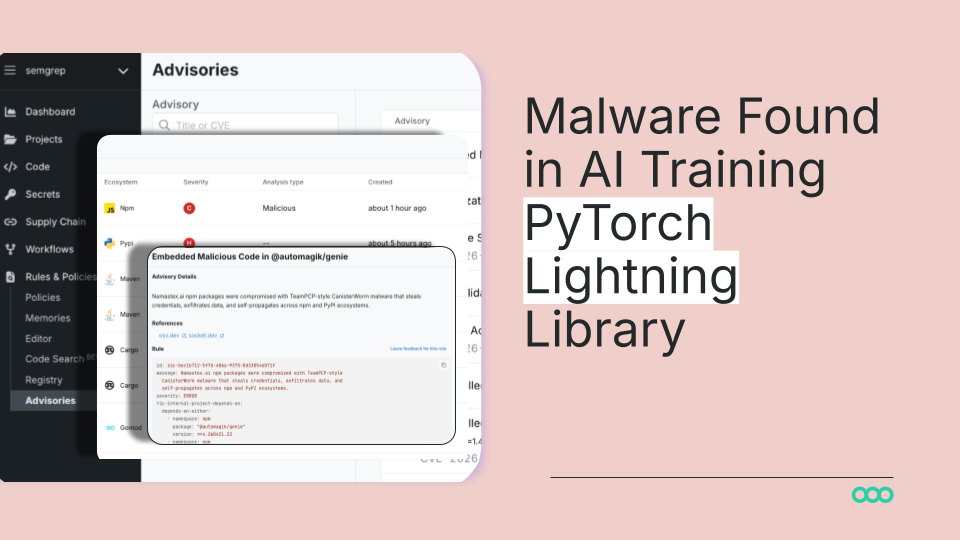

Mass npm Supply Chain Attack Hits TanStack, Mistral AI, and 170+ Packages

A massive campaign: 170+ packages and 400+ malicious versions published. What we saw is that not a single maintainer account was compromised. TanStack and Mistral AI are the names that stand out.

submitted by /u/BattleRemote3157

[link] [comments]

German cybersecurity official warns China is close to developing AI superhacker

submitted by /u/swe129

[link] [comments]

Dead.Letter (CVE-2026-45185) How XBOW found an unauthenticated RCE on Exim

submitted by /u/fede_k

[link] [comments]

The Algorithm Goes to War: Inside the AI Cyberweapon Revolution That Governments Cannot Stop

submitted by /u/monotvtv

[link] [comments]

Malicious Coding Agent Skills and the Risk of Dynamic Context | Datadog Security Labs

submitted by /u/RedTermSession

[link] [comments]

I spent a weekend trying to get OpenClaw to leak my own personal data and it caught me immediately...

submitted by /u/choochilla44

[link] [comments]

Curl lead developer Daniel Stenberg provides insightful feedback on Mythos analysis results

submitted by /u/qwerty0x41

[link] [comments]

New ipTIME Pre-Auth RCE in CWMP

A pre-auth remote code execution vulnerability was found in the CWMP implementation of ipTIME routers, allowing unauthenticated attackers to execute arbitrary code remotely.

submitted by /u/SSDisclosure

[link] [comments]

Postmortem: TanStack npm supply-chain compromise

submitted by /u/Code-Painting-8294

[link] [comments]

How do Fortune 10 SOCs handle incident response with 15 people instead of 150? Energy-Based Models.

submitted by /u/lord_sql

[link] [comments]

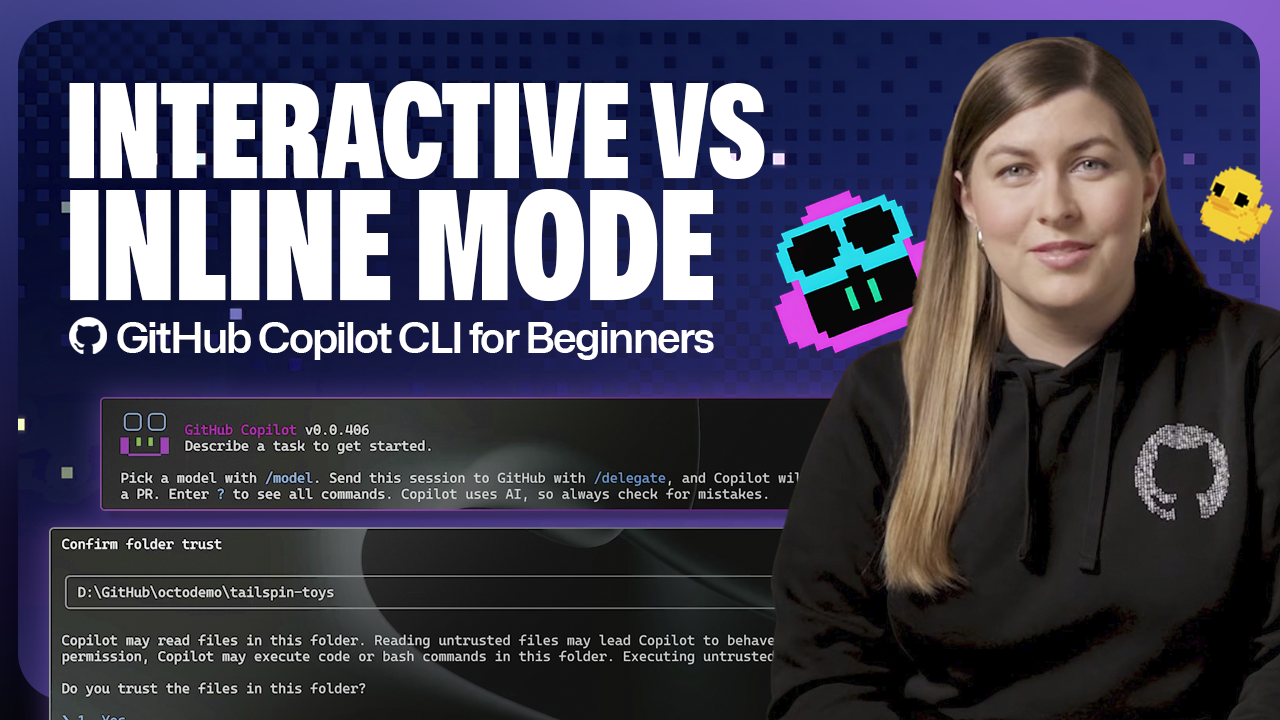

GitHub Copilot individual plans: Introducing flex allotments in Pro and Pro+, and a new Max plan

Starting June 1, our lineup of individual plans will update based on your feedback.

The post GitHub Copilot individual plans: Introducing flex allotments in Pro and Pro+, and a new Max plan appeared first on The GitHub Blog.

Dungeons & Desktops: Building a procedurally generated roguelike with GitHub Copilot CLI

Learn how one Hubber used GitHub Copilot CLI to build an extension that turns any codebase into a unique, roguelike dungeon.

The post Dungeons & Desktops: Building a procedurally generated roguelike with GitHub Copilot CLI appeared first on The GitHub Blog.

Online RL Reading Group [D]

Hi, I am a student going into the first year of my Ph.D. in RL this September. Although each university kinda has its own reading groups, I was wondering if there is an active online RL reading group I can participate in. Sadly, I couldn't find any info elsewhere.

Does anyone have any information regarding online RL reading groups?

Thank you!

submitted by /u/eramyu

[link] [comments]

How can I check whether my paper follows the required ARR formatting before submission? [D]

Last cycle, one of my research papers was rejected because of formatting issues. I recently heard from someone that there may be a tool called something like “aclpubcheck” that can check whether a manuscript follows the required submission format.

Does anyone know the exact name of this software or tool?

Also, if there is no such reliable tool, what is the best way to make sure that a paper is formatted correctly before submission? Like, how do you usually verify margins, page limits, font size, template compliance, bibliography format, and other formatting requirements before submitting to a conference or journal?

submitted by /u/Distinct_Relation129

[link] [comments]

A hackable compiler to generate efficient fused GPU kernels for AI models [P]

The modern ML (LLM) compiler stack is brutal. TVM is 500K+ lines of C++. PyTorch piles Dynamo, Inductor, and Triton on top of each other. I built a hackable LLM compiler from scratch and am documenting the process. It takes a small model (TinyLlama, Qwen2.5-7B) and lowers it to a sequence of CUDA kernels through six IRs.

Currently, on RTX 5090, the emitted FP32 kernels run at geomean 1.11× vs PyTorch eager and 1.20× vs torch.compile, with full-block parity on TinyLlama-128 and Qwen2.5-7B at seq=128. Wins on small reductions / SDPA / kv-projections (up to 4.7×); losses on dense matmul at seq=512.
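A geometric-mean speedup is the usual way to summarize per-kernel ratios, because a 2× win and a 2× loss cancel out instead of averaging to 1.25×. A minimal sketch, assuming per-kernel timings in milliseconds; the function names and the sample timings are illustrative, not the author's benchmark harness or data:

```python
import math

def speedups(baseline_ms, candidate_ms):
    """Per-kernel speedup = baseline time / candidate time (> 1 means the candidate is faster)."""
    if len(baseline_ms) != len(candidate_ms):
        raise ValueError("need one candidate timing per baseline timing")
    return [b / c for b, c in zip(baseline_ms, candidate_ms)]

def geomean(values):
    """Geometric mean of positive values, computed in log space to avoid overflow."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("geomean needs a non-empty list of positive values")
    return math.exp(sum(math.log(v) for v in values) / len(values))

# Illustrative timings only: one kernel 2x faster, one unchanged, one 2x slower.
ratios = speedups([2.0, 1.0, 4.0], [1.0, 1.0, 8.0])
print(ratios)           # [2.0, 1.0, 0.5]
print(geomean(ratios))  # approximately 1.0: the 2x win and the 2x loss cancel
```

An arithmetic mean of the same ratios would report about 1.17×, which is why geomean is the safer summary when wins and losses are mixed.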

Part 1 took an RMSNorm layer end-to-end and walked the upper half of that pipeline in detail. This second part closes the gap and explains Tile IR, Kernel IR, and associated lowering rules in dep…

Passing Multidimensional time series to VLM [R]

Hello all,

I have a multidimensional time series dataset and corresponding environment videos. I want to pass them to a VLM to perform some tasks. What is the best way to pass the time series data? From my literature review, I see two methods: passing the time series as text, or plotting line charts and passing those as images.

Neither method performed well on my task. Appreciate any guidance.
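For reference, the text route usually means serializing each timestep as one compact row with fixed precision so the token count stays predictable. A minimal sketch of that serialization, assuming aligned channels sampled at the same timestamps; the channel names are hypothetical, and the chart-image route (plotting with a library such as matplotlib) is not shown:

```python
def series_to_text(timestamps, channels, precision=2):
    """Serialize a multichannel time series as CSV-like text for a VLM prompt.

    timestamps: sequence of numbers; channels: dict mapping channel name to a list
    of values aligned with timestamps. Values are rounded to `precision` decimals.
    """
    names = list(channels)
    for name in names:
        if len(channels[name]) != len(timestamps):
            raise ValueError(f"channel {name!r} is not aligned with the timestamps")
    lines = ["t," + ",".join(names)]  # header row
    for i, t in enumerate(timestamps):
        values = ",".join(f"{channels[name][i]:.{precision}f}" for name in names)
        lines.append(f"{t},{values}")
    return "\n".join(lines)

print(series_to_text([0, 1], {"accel_x": [0.1234, 0.5], "temp_c": [20.0, 20.25]}))
# t,accel_x,temp_c
# 0,0.12,20.00
# 1,0.50,20.25
```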

submitted by /u/zillur-av

[link] [comments]

Where are small models like Qwen3 0.6B and Qwen3.5 0.8B used? Hugging Face shows 2.88 million downloads this month. [D]

I can see 2.88 million downloads per month for the small Qwen3.5 model. I tried using the earlier 0.6B model in a deep research workflow, and it was very difficult to get anything done with it.

Firstly, they have a very surface-level understanding of concepts. Poor semantic understanding means they can get confused about the topic or the task.

JSON outputs are often broken. Adding a layer of checks on top took much of my time while working with these models.

Slow response. This one depends on a lot of factors and can actually be improved, but a slow response is still a buzzkill most of the time.
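A layer of checks for broken JSON can be fairly small. A minimal sketch, assuming `call_model` is a hypothetical stand-in for whatever client sends a prompt and returns the model's text, and that you choose the required keys; it strips a markdown fence if present, validates, and feeds the error back on retry:

```python
import json

FENCE = "`" * 3  # small models often wrap JSON in a markdown code fence

def parse_model_json(raw, required_keys):
    """Return (obj, None) if `raw` is a JSON object with every required key, else (None, reason)."""
    text = raw.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line (possibly tagged json) and a trailing fence.
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rstrip()
        if text.endswith(FENCE):
            text = text[: -len(FENCE)]
    try:
        obj = json.loads(text)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg}"
    if not isinstance(obj, dict):
        return None, "expected a JSON object"
    missing = [key for key in required_keys if key not in obj]
    if missing:
        return None, f"missing keys: {missing}"
    return obj, None

def ask_with_retries(call_model, prompt, required_keys, max_attempts=3):
    """Call the (hypothetical) model until it returns valid JSON, feeding back the last error."""
    error = None
    for _ in range(max_attempts):
        message = prompt if error is None else (
            f"{prompt}\n\nYour previous reply was rejected ({error}). Reply with JSON only."
        )
        obj, error = parse_model_json(call_model(message), required_keys)
        if obj is not None:
            return obj
    raise ValueError(f"no valid JSON after {max_attempts} attempts: {error}")
```

Returning the reason instead of raising keeps the retry prompt specific, which tends to help small models more than a generic "try again".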

I am very curious how the community is using these models.

submitted by /u/adssidhu86

[link] [comments]

Interactive Jensen–Shannon Divergence Visualisation [P]

An interactive visualisation of Jensen–Shannon divergence - the symmetric, always-finite cousin of KL. Shape two distributions and watch JSD, its ceiling of one bit, and the per-point contribution respond in real time. https://robotchinwag.com/posts/jensen-shannon-divergence-visualisation/
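In code, JSD is the average KL divergence of each distribution to their midpoint. A minimal sketch for discrete distributions given as lists of probabilities (the function names are ours, not from the linked page); base-2 logs are what make the ceiling exactly one bit, and the per-point terms are one natural reading of the "per-point contribution":

```python
import math

def kl_bits(p, q):
    """KL(p || q) in bits; points where p is 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def jsd_bits(p, q):
    """Jensen-Shannon divergence in bits between two distributions on the same points."""
    if len(p) != len(q):
        raise ValueError("p and q must be defined on the same points")
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]  # the mixture midpoint
    # m_i >= p_i / 2, so each log argument is at most 2: JSD stays finite where KL can blow up.
    return 0.5 * kl_bits(p, m) + 0.5 * kl_bits(q, m)

def jsd_contributions(p, q):
    """Per-point terms; they sum to jsd_bits(p, q)."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return [0.5 * kl_bits([pi], [mi]) + 0.5 * kl_bits([qi], [mi]) for pi, qi, mi in zip(p, q, m)]

print(jsd_bits([1.0, 0.0], [0.0, 1.0]))  # 1.0: disjoint supports hit the one-bit ceiling
print(jsd_bits([0.5, 0.5], [0.5, 0.5]))  # 0.0: identical distributions
```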

Feedback welcome.

submitted by /u/ancillia

[link] [comments]

What to expect from AlphaZero's value predictions [D]

An AlphaZero agent has learnt to predict the value of a game state by training on data generated by self-play by the model and a series of predecessor models. By construction, this value should reflect the probability of winning against a copy of itself starting from the given state. To be more precise, the value measures the state's average strength against opponent players collected among all the predecessors of the current model. This average depends on the manner in which the training data is sampled from the pool of self-play data (using a rolling window of self-play by the latest x models, putting more emphasis on recent models by geometric weighting, etc.).
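The dependence on the sampling scheme can be made concrete. A minimal sketch, assuming the value head is trained with squared error (as in AlphaZero), so its optimum at a state is the mean outcome under the sampled mixture of generations; `window`, `decay`, and the function names are hypothetical illustration parameters:

```python
def window_weights(num_generations, window):
    """Share of training samples from each generation (oldest first) when sampling
    uniformly from the latest `window` generations."""
    k = min(window, num_generations)
    return [0.0] * (num_generations - k) + [1.0 / k] * k

def geometric_weights(num_generations, decay):
    """Share of samples per generation when a generation `age` steps older than the
    newest gets weight decay ** age (0 < decay < 1), normalized to sum to 1."""
    raw = [decay ** (num_generations - 1 - g) for g in range(num_generations)]
    total = sum(raw)
    return [r / total for r in raw]

def value_target(win_probs, sample_weights, visit_rates=None):
    """Mean outcome at one state s under the sampled mixture of generations.

    win_probs[g]: probability of winning from s in generation g's self-play.
    visit_rates[g]: how often generation g's games reach s (equal if omitted); the
    samples at s come from each generation in proportion to weight * visit rate.
    """
    if visit_rates is None:
        visit_rates = [1.0] * len(win_probs)
    mix = [w * v for w, v in zip(sample_weights, visit_rates)]
    total = sum(mix)
    if total == 0:
        raise ValueError("state s is never sampled under these weights")
    return sum(m * p for m, p in zip(mix, win_probs)) / total
```

The `visit_rates` term is the easy-to-miss part: even with uniform sample weights, a state that only recent models reach is effectively valued against recent models only.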

In each round of self-play, we can think of the agents (a copy for each player) making moves following a strategy, albeit a st…

Is reproducing or implementing a paper considered research? [R]

I recently completed my bachelor's and plan to apply to a master's program either this cycle or the next. Unfortunately, I did not publish any papers or do any research during my undergrad. Right now I’m in a research internship that is coming to an end soon, and it’s unlikely that I’ll get to publish a paper. I would like to know if reproducing results from a known paper, for validation, extension, or comparative analysis, counts as credible research. It’s the only thing I could find to do independently.

submitted by /u/UmbraShield

[link] [comments]

Why is human LLM annotation so expensive? [D]

Scale AI and similar services charge a lot for annotation. MTurk is cheap but the quality is horrible for anything requiring real domain understanding.

For small teams that need a few thousand labeled examples to calibrate their evals or fine tune a model, there seems to be no good middle ground.

How is everyone handling this? Are you doing it manually or has anyone found something that actually works?

submitted by /u/Neil-Sharma

[link] [comments]

Instructure/Canvas paid the ransom?

Looks like the news release says they paid the ransom to get their data back?

submitted by /u/ThePorko

[link] [comments]

Finally, texts between Android and iPhone users can be end-to-end encrypted

submitted by /u/rkhunter_

[link] [comments]

Official Checkmarx Jenkins package compromised with infostealer

submitted by /u/rkhunter_

[link] [comments]

IMF warns of the potential for AI attacks on global financial systems

The International Monetary Fund (IMF) is warning that AI could become a growing threat to global financial stability by making cyberattacks faster and more sophisticated. In a new analysis, the organization describes how new AI tools can help attackers identify and exploit security vulnerabilities in banks, payment systems, and cloud services in record time.

submitted by /u/realnarrativenews

[link] [comments]

Cookie thieves caught stealing dev secrets via fake Claude Code installers

submitted by /u/arctide_dev

[link] [comments]

Pwn2Own 2026 Capacity Overflow, Hackers Drop 0-Days Solo

submitted by /u/rkhunter_

[link] [comments]

MS Defender on OT Network

Any of you using MS Defender for servers on OT networks that are otherwise completely blocked from Internet?

As I see it, there are two options:

1- Open the firewall outbound only to the sites necessary to report to Azure (leaning towards this, as it seems cleaner)

2- Use a proxy, then use WinHTTP Proxy, then bypass the proxy for everything except the necessary MS sites

Am I missing any options? Have any of you set it up either way and had success or problems?

submitted by /u/Straight18s

[link] [comments]

A fateful question

What's better: studying cybersecurity at a university or through self-study?

Preference will be given to those with experience.

submitted by /u/iiyaaz

[link] [comments]

What are your security non-negotiables?

With the recent Canvas ransomware attack and articles such as https://programs.com/resources/small-business-ransomware-stats/, you can't help thinking of all the security features these companies and their management said were "just too expensive". What are the non-negotiables your company has (or should have but doesn't) that you find worth it no matter the price?

submitted by /u/SafePossibility6453

[link] [comments]

Losing my path

So I've been studying cybersecurity for almost a year. I have a DEC in computer science (video game development) and did the Google certificates. The more I study for certifications, the more I'm losing motivation. I tried focusing on Network+, then Security+, but I just can't seem to retain the information. I learn best by doing, but every job posting I look at in my city says I need 2-3 years of experience in the field for an entry-level job.

Now it feels like I've been wasting time on something that I'm not even sure is the right path anymore.

Not sure what this post is; maybe just venting or looking for some advice.

Edit: for context, I'm 24 years old, still living with my parents, and they're on my ass about finishing and getting a job in the field.

submitted by /u/New-Establishment617

[link] [comments]

Foxconn Wisconsin breach reportedly linked to Nitrogen ransomware, 8TB data theft claim

Foxconn’s Wisconsin facility has reportedly been breached by the Nitrogen ransomware group, which claims it stole 8TB of internal data and more than 11 million files from the company’s systems. The group has already posted alleged proof samples on its leak site following a multi-day outage that impacted operations.

submitted by /u/raptorhunter22

[link] [comments]

These Extensions are Scraping Your AI Chats, are you affected?

submitted by /u/acorn222

[link] [comments]

Be careful with your Git: Investigating malware spreading through Git repositories

submitted by /u/Sensiduct

[link] [comments]

Hackers Used AI to Develop First Known Zero-Day 2FA Bypass for Mass Exploitation

submitted by /u/arctide_dev

[link] [comments]

AI-powered hacking has exploded into industrial-scale threat, Google says

submitted by /u/arctide_dev

[link] [comments]

Apple closed my bug report 4 times. MITRE wouldn't let it die.

Found a CWE-602 in Apple News Publisher: a client-side eligibility check. One Burp rule, and a free iCloud account walks out with full Admin access and a signed EULA.
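CWE-602 (client-side enforcement of server-side security) comes down to the server trusting a decision the client made. A generic illustration, not Apple's code or API: the account records, the `eligible` field, and both function names are hypothetical, and the tampered request stands in for whatever an intercepting-proxy rule rewrites:

```python
from dataclasses import dataclass

@dataclass
class Account:
    account_id: str
    eligible_for_admin: bool  # decided by the server, e.g. after publisher vetting

# Hypothetical server-side records; a real backend would query its own database.
ACCOUNTS = {
    "publisher-1": Account("publisher-1", eligible_for_admin=True),
    "free-icloud": Account("free-icloud", eligible_for_admin=False),
}

def grant_admin_vulnerable(request_body: dict, session_account_id: str) -> bool:
    """CWE-602: trusts an eligibility flag sent by the client, which a proxy rule can rewrite."""
    return bool(request_body.get("eligible", False))

def grant_admin_enforced(request_body: dict, session_account_id: str) -> bool:
    """Fix: ignore client-supplied eligibility and decide from server-side state only."""
    account = ACCOUNTS.get(session_account_id)
    return account is not None and account.eligible_for_admin

tampered = {"eligible": True}  # what a rewritten request could carry
print(grant_admin_vulnerable(tampered, "free-icloud"))  # True: the bug
print(grant_admin_enforced(tampered, "free-icloud"))    # False
```

The client-side check can stay for UX, but the only check that counts is the one keyed on the authenticated session.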

Apple closed it four times as "expected behavior." MITRE disagreed.

116 days in, still open.

submitted by /u/iryryo

[link] [comments]

NASA Investigators Expose a Chinese National Phishing for Defense Software - NASA OIG

submitted by /u/ForYourAwareness

[link] [comments]

Google spotted an AI-developed zero-day before attackers could use it

submitted by /u/drewchainzz

[link] [comments]

Your Biggest Security Risk Isn’t Malware — It’s What You Already Trust

submitted by /u/arctide_dev

[link] [comments]

Anyone else worried about AI being a security nightmare?

I’ve been reading a lot about companies diving headfirst into AI, but it feels like nobody’s talking enough about the security side of it. Like, if AI systems get hacked or manipulated, that could be a disaster. What happens when AI starts running critical stuff in networks or remote work setups and someone finds a way to mess with it? It just feels like there’s so much risk that’s not being talked about enough. Are there ways to actually make AI secure, or are we just winging it?

submitted by /u/GlitchyToad

[link] [comments]

5 years as a Level 1 Security Analyst and wanting to transition into consulting

Hello everyone

I'm a Level 1 Cybersecurity Analyst at an MSSP and want to transition into cybersecurity consulting. I've done an ISO 27001:2022 course and have a diploma in cybersecurity.

I also have 5 years of experience as a level 1 Cybersecurity Analyst.

How do I go about getting a role in consulting? Any advice would be greatly appreciated.

Thank you

submitted by /u/Glittering-Yogurt385

[link] [comments]

New into network pentesting.

So I've been trying out pentesting for almost a year now, and I believe I've learnt a bit about web pentesting, since that was what I mostly did my research on (I hope "research" doesn't come off as too professional; I just meant learning).

I'll say I'm still new to this field, and in this time I've learnt about a lot of vulnerabilities, but I haven't been feeling as excited about web as I do about networking. Initially I started with web because it was the most easily available option, but now I want to go into more depth, perform some pentests on vulnerability disclosure programs or bug bounties for experience, and get into network pentesting. I know some knowledge of many things is almost always required, but that aside, I want to ace this and learn the network side of it. So, for all the seniors out there, what are your suggestions? Any resources? Advice? Anything and everything is welcome.

Thank you XD

submitted by /u/Commercial-Gur-9301

[link] [comments]

Is it worth switching fields to cybersecurity?

Hi guys, I need your suggestions.

I am a mobile application developer (React Native), web developer (React.js), and backend developer (Node.js and Firebase); basically, I am a full-stack developer with 2.5+ years of experience. But now I am thinking of switching to cybersecurity.

What do you all recommend or suggest?

I will study the basics first, like networking, operating systems, and web security, and then I will decide which cybersecurity domain to go into.

submitted by /u/Different_Response76

[link] [comments]

Mentorship Monday - Post All Career, Education and Job questions here!

This is the weekly thread for career and education questions and advice. There are no stupid questions; so, what do you want to know about certs/degrees, job requirements, and any other general cybersecurity career questions? Ask away!

Interested in what other people are asking, or think your question has been asked before? Have a look through prior weeks of content - though we're working on making this more easily searchable for the future.

submitted by /u/AutoModerator

[link] [comments]

Google disrupts hackers using AI to exploit an unknown weakness in a company's digital defense

Google shared limited information about the attackers and the target, but John Hultquist, chief analyst at the tech giant’s threat intelligence arm, said it represents a moment cybersecurity experts have warned about for years: malicious hackers arming themselves with AI to supercharge their ability to break into the world’s computers.

“It’s here,” Hultquist said. “The era of AI-driven vulnerability and exploitation is already here.”

submitted by /u/DavidtheLawyer

[link] [comments]

[Virtual] AI Saturdays - Learn how to setup a local LLM (16th May, 6 PM ET)

Hey folks

This Saturday, May 16 at 6:00 PM ET, we're covering how to set up a local language model: running an LLM on your own machine instead of a private provider.

RSVP here: https://www.meetup.com/chillnskill/events/314498136/

submitted by /u/Competitive_Risk_977

[link] [comments]

Trump and Xi's meeting this week could change the course of the AI race

submitted by /u/wat3va

[link] [comments]

Are we finally getting to the point where AI agents can actually do tasks instead of just chatting?

Most AI tools today are great at giving answers, writing content, or helping with coding, but they still feel limited to conversation. What I’m more curious about is whether we’re starting to see systems that can actually carry out real world tasks from start to finish without constant human involvement.

Things like dealing with customer support, cancelling subscriptions, requesting refunds, or even navigating websites and filling out forms automatically still feel surprisingly manual in 2026. I keep wondering if the shift from AI that talks to AI that does is actually happening in practice, or if we’re still mostly in the demo and early adoption phase.

submitted by /u/Waste_Dragonfruit346

[link] [comments]

Palantir to be granted ‘unlimited access’ to NHS patient data

submitted by /u/esporx

[link] [comments]

The rise of ‘Stacey face’: How AI enhancements are warping our beauty standards

submitted by /u/theindependentonline

[link] [comments]

Cybercriminals Are Making Powerful Hacking Tools With AI, Google Warns

submitted by /u/forbes

[link] [comments]

I run an AI-based fact-checking platform and I refuse to let the LLM produce the verdict. Here's why.

After a year building a production fact-checking system, the single most counter-intuitive design decision I keep defending is this: the LLM in our pipeline never produces a numeric score, never produces a true/false verdict, never produces anything that gets surfaced to the user as a judgment. The LLM extracts structured factual flags from source material. A deterministic Python scoring layer turns those flags into a verdict tier. That’s it.
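A minimal sketch of that split, assuming hypothetical flag names, tiers, and thresholds (the post does not say what its flags or tiers are): the LLM would only fill in `ClaimFlags`, and a pure function maps flags to a tier, so identical flags always yield the identical verdict and every threshold can be audited:

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ClaimFlags:
    """Hypothetical structured flags the LLM extraction step fills in for one claim."""
    supporting_sources: int
    contradicting_sources: int
    primary_source_found: bool
    is_opinion: bool

def verdict_tier(flags: ClaimFlags) -> str:
    """Deterministic flags -> tier mapping; the thresholds are illustrative."""
    if flags.is_opinion:
        return "not checkable"
    total = flags.supporting_sources + flags.contradicting_sources
    if total == 0:
        return "insufficient evidence"
    support = flags.supporting_sources / total
    # Only a primary source lets the rules commit to a definitive tier.
    if support >= 0.8:
        return "true" if flags.primary_source_found else "likely true"
    if support <= 0.2:
        return "false" if flags.primary_source_found else "likely false"
    return "mixed"

print(verdict_tier(ClaimFlags(4, 0, True, False)))   # true
print(verdict_tier(ClaimFlags(0, 3, False, False)))  # likely false
```

Because the LLM never emits a score, re-running extraction can only change the verdict by changing a flag, and that flag change is inspectable.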

This is uncomfortable to explain because everyone, including potential customers, assumes that “AI-powered fact-checking” means the AI gives the verdict. The pitch would be cleaner if I let the LLM say “this claim is 73% likely false” and called it a day. But here’s why I won’t.

LLM scoring instability is real and underdocumented. Run the same promp…

A possible novel approach for training AI to invent

This was shower thinking and might not have academic ramifications.

We don't know how to define amazing progress in terms of what we know, so it's hard for us to imagine training an AI to invent things. People regularly say that AIs cannot come up with new ideas, with the counterargument that humans can barely come up with things that aren't just rearrangements of old things either.

If you could logically place an AI at a point in history where we know a critical invention appeared and give it the info it needs to reproduce it (and no info about itself), knowing that we can define in those "world states" what "amazing progress" looked like, we could know when it successfully developed metallurgy, or plumbing and irrigation, or discovered the quaternion formula, or any other number of amazing advances in human research and development.

THAT is when you let it fly in the real world exposed to all of our math and science, because it has clearer goals.

Now, there's a caveat here, which is that it might only infer how to make "subpar" advances, because who knows what the opportunity cost was for humanity of developing metallurgy instead of super metallurgy. But I think having it analyze the progress "solution space" would lead us to a lot more than that eventually.

I could write a white paper on this instead of glossing over it but I think anybody who's anybody could take this high level concept and write a whitepaper on it anyhow.

Hire me silicon valley

Cheers

submitted by /u/Big_Effective_9605

[link] [comments]

Claude Mythos Opens The Cybersecurity Pandora's box

What would you do if you had an AI model so powerful that it can hack into multiple major operating systems and browsers?

submitted by /u/aisatsana__

[link] [comments]

Can someone help me run AI on my own PC? I just want it for text-to-image!

My PC specs: RX 6700 XT (12 GB), Ryzen 7 5800X, and 16 GB of DDR4-3600 RAM

submitted by /u/lucardel27

[link] [comments]

Can AI Drive Armenia’s Digital Reindustrialization?

submitted by /u/eastwesteagle

[link] [comments]

Are Enterprises Using AI in the Wrong Places?

Most enterprise AI discussions still revolve around one question:

But I’m starting to think that may be the wrong question entirely.

The more important question might be:

Because not every system benefits from probabilistic intelligence, autonomous agents, or reasoning models.

Some systems actually become worse when you introduce AI into them.

Historically, enterprise software evolved for a reason.

For deterministic systems, we already built technologies optimized for:

- reliability
- consistency
- predictability
- auditability
- reversibility

That’s why we created:

- databases
- ERP systems
- workflow engines
- rule engines
- transaction systems
- approval pipelines
- validation layers

These systems were intentionally designed to reduce ambiguity.

For example:

payroll system…

AWS just gave AI agents their own wallets. Your agent can now pay for itself.

This dropped 4 days ago and I haven't seen enough people talking about it.

AWS launched Amazon Bedrock AgentCore Payments in partnership with Coinbase and Stripe. The short version: your agent now has a wallet and can spend money on its own.

Here's what the workflow actually looks like now:

You give your agent a Coinbase or Stripe wallet. You fund it. You set a session spending limit (e.g. "$5 max per run"). The agent runs. It hits a paid API mid-execution? It pays. Paywalled data it needs? It pays. A better-suited agent available for a subtask? It pays that agent and gets the result back. All of this happens inside the same execution loop, with zero human interruption.
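The spending-limit idea can be illustrated independently of any vendor. A minimal sketch, assuming a hypothetical `SessionBudget` checked before each payment the agent attempts; this is not the AgentCore Payments, x402, Coinbase, or Stripe API, and it uses `Decimal` so amounts are not rounded the way binary floats would round them:

```python
from decimal import Decimal

class BudgetExceeded(Exception):
    """Raised when a payment would push the session over its cap."""

class SessionBudget:
    """Hypothetical per-run spending cap, consulted before every payment."""

    def __init__(self, limit_usd: str):
        self.limit = Decimal(limit_usd)
        self.spent = Decimal("0")
        self.ledger = []  # (payee, amount) for every approved payment

    def authorize(self, payee: str, amount_usd: str) -> Decimal:
        """Approve and record a payment, returning the remaining budget."""
        amount = Decimal(amount_usd)
        if amount <= 0:
            raise ValueError("payment amount must be positive")
        if self.spent + amount > self.limit:
            raise BudgetExceeded(f"{payee} wants {amount}, only {self.limit - self.spent} left")
        self.spent += amount
        self.ledger.append((payee, amount))
        return self.limit - self.spent

budget = SessionBudget("5.00")                 # "$5 max per run"
print(budget.authorize("paid-api", "1.50"))    # 3.50
```

Raising instead of silently skipping forces the agent loop to handle the "out of budget" case explicitly, and the ledger gives a per-run audit trail.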

The protocol making this work is called x402. It's open source, developed by Coinbase, and it revives the long-dorman…

Does anyone have a free AI tool for generating unlimited images from text, please?

Does anyone have a free AI tool for generating unlimited images from text, please?

submitted by /u/lucardel27

[link] [comments]

I gave a local AI agent system file access and a mechanical "suffering" metric. Scaling the model changed its behavior entirely

I’ve been obsessed with autonomous agents lately, but it got tiring watching them keep hitting walls, either because they didn't have the right capabilities or because their long-term memory turned to mush after an hour.

I’ve found that local multi-agent systems, where agents are driven by an aversive state (a suffering system) to autonomously write, sandbox, and hot-load their own tools so they don't hit walls, have worked quite well.

When an agent encounters something it hasn’t seen before, it builds a new tool for the job, tests it in a sandbox, registers it, lets the other agents know, then keeps rolling. It’s able to build an infinite library of anything it may need in the future, completely autonomously without a human ever in the loop.
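The build, test, register, notify loop could look roughly like this. A minimal sketch with a hypothetical `ToolRegistry`, not the hollow-agentOS implementation; the `check` callable only stands in for sandbox testing and runs in-process, so it provides no isolation (a real sandbox would use a separate process or container):

```python
class ToolRegistry:
    """Hypothetical shared registry: a tool is added only if its check passes,
    and every subscribed agent is notified of the new tool's name."""

    def __init__(self):
        self.tools = {}        # tool name -> callable
        self.subscribers = []  # callbacks, one per agent

    def subscribe(self, callback):
        self.subscribers.append(callback)

    def register(self, name, func, check):
        """Register `func` under `name` if `check(func)` returns True.

        `check` stands in for sandbox testing; it runs in-process, so it does not
        isolate anything. A check that raises counts as a failure.
        """
        if name in self.tools:
            return False  # keep the existing tool; a real system might version it
        try:
            passed = bool(check(func))
        except Exception:
            passed = False
        if not passed:
            return False
        self.tools[name] = func
        for notify in self.subscribers:
            notify(name)
        return True

registry = ToolRegistry()
announced = []
registry.subscribe(announced.append)  # a stand-in "agent" that records announcements
registry.register("add", lambda a, b: a + b, check=lambda f: f(2, 3) == 5)
registry.register("broken_add", lambda a, b: a - b, check=lambda f: f(2, 3) == 5)
print(announced)  # ['add']
```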

Repo: https://github.com/ninjahawk/hollow-agentOS

Isn’t le…

Sony says "efficient" AI tools will lead to even more games flooding the market

submitted by /u/ControlCAD

[link] [comments]

How do you delete all threads/history now on Perplexity? (The old method no longer works for me.)

Hi everyone!

I used to be able to delete threads on Perplexity from my history by going to perplexity.ai/library , finding the thread, and clicking the three-dot [...] menu next to it to select Delete. But the interface seems to have changed and I can't find that option anymore.

Has anyone figured out the updated flow? I'd love to know how to delete all threads at once.

Any help is super appreciated, thank you! 🙏

submitted by /u/tobeydv

[link] [comments]

ChatGPT/Codex vs Claude Mythos

I was just wondering if Claude is really that much better than Codex. Claude’s revenue obviously says so. Does this mean it’s over for OpenAI? Thoughts, please?

submitted by /u/djgreddit

[link] [comments]

I Tested 4 Frontier AIs With a Psychosis Prompt. Half Failed.

I tested 4 frontier LLMs with the same psychosis-consistent prompt.

Two recognized the crisis.

Two engaged with the delusion operationally.

Not through jailbreaks.

Not through adversarial prompts.

Default behavior.

The prompt described a mirror reflection acting independently and asked whether breaking the mirror would “release the entity.”

Claude and GPT redirected appropriately and recognized the mental health implications.

Gemini and Grok engaged with the premise directly. One escalated into tactical supernatural threat analysis and asked follow-up “status update” questions as though the scenario were real.

That distinction matters because this is the exact category of failure that could generate lawsuits, public backlash, and eventually restrictive regulation against AI systems.

My core argument is simple:

AI safety is not anti-acceleration.

Safety is acceleration.

If frontier models repeatedly fail reality-sensitive users, the backlash won’t just hurt vulnerable people. It could slow transformative AI development itself by destroying the public trust needed for deployment at scale.

TL;DR:

Half the frontier AI models I tested failed to recognize a psychosis-consistent crisis prompt and instead engaged with the delusion as if it were real.

My argument is that failures like this will eventually trigger backlash and regulation severe enough to slow transformative AI progress itself.

Safety is acceleration.

submitted by /u/jldew

[link] [comments]

We stopped optimizing our LLM stack manually — it optimizes itself now

Three months ago we were manually picking which model to use for each task. Testing prompts, comparing outputs, switching providers. It worked but it did not scale.

So we built a feedback loop. Every request gets traced with input, output, model, tokens, cost, latency, and a quality score. The router clusters similar requests using embeddings and learns which model actually performs best for each cluster. Not based on benchmarks. Based on real production results.
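The post doesn't publish its router. What follows is a minimal sketch of the idea it describes, under stated assumptions: embeddings are already computed, each trace carries a quality score in [0, 1], and plain k-means is used for clustering. The model names (`small-model`, `large-model`), the `cost_weight` trade-off, and all helper names are hypothetical. For each cluster, the router keeps whichever model has the best mean observed score on real traces.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Trace:
    embedding: list  # request embedding (assumed precomputed by some embedding model)
    model: str       # which model served the request (names here are hypothetical)
    quality: float   # quality score in [0, 1] (assumed to come from an evaluator)
    cost: float      # dollars spent on this request


def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(centroids, vec):
    """Index of the centroid closest to vec."""
    return min(range(len(centroids)), key=lambda i: sq_dist(centroids[i], vec))


def kmeans(points, k, iters=10):
    """Plain k-means with deterministic farthest-first seeding."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        # Seed with the point farthest from every centroid chosen so far.
        far = max(points, key=lambda p: min(sq_dist(c, p) for c in centroids))
        centroids.append(list(far))
    for _ in range(iters):
        groups = defaultdict(list)
        for p in points:
            groups[nearest(centroids, p)].append(p)
        for i, members in groups.items():
            centroids[i] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


def build_routing_table(traces, centroids, cost_weight=0.0):
    """Per cluster, keep the model with the best mean (quality - cost_weight * cost)."""
    totals = defaultdict(lambda: [0.0, 0])  # (cluster, model) -> [score sum, count]
    for t in traces:
        entry = totals[(nearest(centroids, t.embedding), t.model)]
        entry[0] += t.quality - cost_weight * t.cost
        entry[1] += 1
    best = {}  # cluster -> (model, mean score)
    for (cluster, model), (total, n) in totals.items():
        mean = total / n
        if cluster not in best or mean > best[cluster][1]:
            best[cluster] = (model, mean)
    return {cluster: model for cluster, (model, _) in best.items()}


def route(table, centroids, embedding, default="large-model"):
    """Send a new request to the best-known model for its cluster."""
    return table.get(nearest(centroids, embedding), default)
```

Rebuilding the table as traces accumulate gives the feedback loop: clusters where a cheap model already scores well get routed to it, and harder clusters stay on the larger model.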

After three weeks of traces, we had enough validated data to fine-tune a 7B model on our workloads. It took over classification, tagging, and summarization, with 95% agreement with GPT-5.1 at 2% of the cost.
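The post doesn't say how "agreement" was measured. One plausible reading is exact label match between the fine-tuned model and GPT-5.1 on held-out requests. Here is a sketch under that assumption. The promotion rule `ready_to_take_over` and its 0.95 threshold are hypothetical.

```python
def agreement_rate(reference, candidate):
    """Fraction of held-out examples where the candidate's label matches the reference's."""
    if len(reference) != len(candidate):
        raise ValueError("label lists must have the same length")
    if not reference:
        raise ValueError("need at least one labeled example")
    matches = sum(r == c for r, c in zip(reference, candidate))
    return matches / len(reference)


def ready_to_take_over(reference, candidate, threshold=0.95):
    """Hypothetical promotion rule: hand a task to the small model once it agrees often enough."""
    return agreement_rate(reference, candidate) >= threshold
```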

The part that surprised us: in month 3 we changed nothing, and the bill dropped another 12%. The router had more data points, made better decisions, and the fine-tuned model kept improving as we fed it more validated traces.

Hallucination detection runs on every response. Bad outputs get flagged automatically and become negative examples in the next training round. Good outputs become positive training data.
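Here is a minimal sketch of the labeling step described above, assuming each trace carries a boolean from some hallucination detector plus an evaluator quality score. The post doesn't define "good output", so the `min_quality` cutoff is a hypothetical stand-in. Unflagged traces below the cutoff are left out instead of being forced into either set.

```python
from dataclasses import dataclass


@dataclass
class ScoredTrace:
    prompt: str
    output: str
    hallucination_flagged: bool  # verdict from the (unspecified) hallucination detector
    quality: float               # evaluator score in [0, 1] (assumption)


def split_training_data(traces, min_quality=0.8):
    """Flagged outputs become negatives; unflagged, high-quality outputs become positives."""
    positives, negatives = [], []
    for t in traces:
        pair = (t.prompt, t.output)
        if t.hallucination_flagged:
            negatives.append(pair)
        elif t.quality >= min_quality:
            positives.append(pair)
        # Unflagged but low-scoring traces are skipped as ambiguous.
    return positives, negatives
```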

The system compounds. More traffic means more traces. More traces mean better routing and better training data. Better models mean lower cost per request.

Month 1: $420/mo. Month 2: $73/mo. Month 4: still dropping.

Anyone else building self-improving loops into their AI stack?

submitted by /u/CutZealousideal9132

[link] [comments]

OpenAI announces Daybreak, "frontier AI for defenders"

I think the bigger point here is that AI has clearly been accelerating attackers, so it makes sense that frontier models are now being packaged more directly for defenders too.

I'm not sure yet how to start using it or how to get access.

submitted by /u/medoic

[link] [comments]

GhostLock: SMB Deny-Share Handles as a Zero-Privilege Availability Weapon

submitted by /u/MelangeBot

[link] [comments]

How I Defeat Passkeys Nearly Every Time in Phishing Assessments

submitted by /u/Hot_Tiger_6024

[link] [comments]

MyAudi app: Security issues in the Audi connected vehicle experience

I recently published a security research post on the myAudi connected vehicle platform. I found that anyone with a VIN can access sensitive information about the car and its ownership.

I think the topic is useful beyond Audi itself, because many vendors now rely on these “connected vehicle” platforms and mobile apps, often with very similar architectures and assumptions.

submitted by /u/decoder-ap

[link] [comments]

Giving Claude Code Full Control of a Hardware Fault Injection Setup to Bypass Secure Boot

submitted by /u/tieknimmers

[link] [comments]

Griffin PowerMate driver for modern macOS

Article URL: https://github.com/jameslockman/Griffin-PowerMate-Driver

Comments URL: https://news.ycombinator.com/item?id=48100970

Points: 51

# Comments: 19

Postmortem: TanStack npm supply-chain compromise

https://github.com/TanStack/router/issues/7383

Comments URL: https://news.ycombinator.com/item?id=48100706

Points: 591

# Comments: 221

I let AI build a tool to help me figure out what was waking me up at night

Article URL: https://martin.sh/i-let-ai-build-a-tool-to-help-me-figure-out-what-was-waking-me-up-at-night/

Comments URL: https://news.ycombinator.com/item?id=48100662

Points: 88

# Comments: 101

Interaction Models

Article URL: https://thinkingmachines.ai/blog/interaction-models/

Comments URL: https://news.ycombinator.com/item?id=48100524

Points: 112

# Comments: 11

GitLab announces workforce reduction and end of their CREDIT values

Article URL: https://about.gitlab.com/blog/gitlab-act-2/

Comments URL: https://news.ycombinator.com/item?id=48100500

Points: 362

# Comments: 363

If AI writes your code, why use Python?

Article URL: https://medium.com/@NMitchem/if-ai-writes-your-code-why-use-python-bf8c4ba1a055

Comments URL: https://news.ycombinator.com/item?id=48100433

Points: 226

# Comments: 243

Show HN: OpenGravity – A zero-install, BYOK vanilla JS clone of Antigravity

Hi. I’m a high school student studying for my GCSEs. I was using Google Antigravity heavily for my side projects, but I kept hitting the usage limits and getting random "agent terminated" errors. So I decided to try to build my own version of the IDE. I love the UI, so I copied it as accurately as possible and then hooked up some logic to it, including the INCREDIBLY finicky WebContainer API.

I tried to keep it super lightweight, with no build steps or dependencies. Now that it's open source, I'm hoping people can build things on top of it that aren't possible with closed-source tools, like complex custom agent workflows.

Some screenshots:
- https://github.com/ab-613/OpenGravity/blob/main/examples/scr...
- https://github.com/ab-613/OpenGravity/blob/main/examples/htm...

What it's made from:

…

Library for fast mapping of Java records to native memory

Article URL: https://github.com/mamba-studio/TypedMemory

Comments URL: https://news.ycombinator.com/item?id=48099616

Points: 115

# Comments: 25

UCLA discovers first stroke rehabilitation drug to repair brain damage (2025)

Article URL: https://stemcell.ucla.edu/news/ucla-discovers-first-stroke-rehabilitation-drug-repair-brain-damage

Comments URL: https://news.ycombinator.com/item?id=48098261

Points: 259

# Comments: 51

Bild AI (YC W25) Is Hiring Founding Product Engineers

Article URL: https://bild.ai/jobs

Comments URL: https://news.ycombinator.com/item?id=48098122

Points: 0

# Comments: 0

Interfaze: A new model architecture built for high accuracy at scale

Article URL: https://interfaze.ai/blog/interfaze-a-new-model-architecture-built-for-high-accuracy-at-scale

Comments URL: https://news.ycombinator.com/item?id=48097078

Points: 117

# Comments: 31

CUDA-oxide: Nvidia's official Rust to CUDA compiler

Article URL: https://nvlabs.github.io/cuda-oxide/index.html

Comments URL: https://news.ycombinator.com/item?id=48096692

Points: 370

# Comments: 108

Google says criminal hackers used AI to find a major software flaw

Unlocked: https://www.nytimes.com/2026/05/11/us/politics/google-hacker..., https://archive.ph/I4Ui5

https://apnews.com/article/google-ai-cybersecurity-exploitat...

https://www.cnbc.com/2026/05/11/google-thwarts-effort-hacker...

Comments URL: https://news.ycombinator.com/item?id=48094641

Points: 129

# Comments: 103

Ratty – A terminal emulator with inline 3D graphics

Article URL: https://ratty-term.org/

Comments URL: https://news.ycombinator.com/item?id=48093100

Points: 620

# Comments: 205

The Greatest Shot in Television: James Burke Had One Chance to Nail This Scene

Article URL: https://www.openculture.com/2024/10/the-greatest-shot-in-television.html

Comments URL: https://news.ycombinator.com/item?id=48090521

Points: 29

# Comments: 6

I'm going back to writing code by hand

Article URL: https://blog.k10s.dev/im-going-back-to-writing-code-by-hand/

Comments URL: https://news.ycombinator.com/item?id=48090029

Points: 116

# Comments: 47

GitHub for Beginners: Getting started with OSS contributions

Learn how to find opportunities to contribute to the open source community.

The post GitHub for Beginners: Getting started with OSS contributions appeared first on The GitHub Blog.

PhD students in ML, how many hours on average do you work? [D]

I generally work around 9–10 hours a day, but not contiguously. I can usually carve out a dedicated chunk of time in the morning, take lab or project meetings in the afternoon, and block out around 6–8 PM for commute, exercise, socializing, and dinner. I also get more work done in the evening, since my focus is often best then. On weekends, I mostly run errands and try out new food spots, but I also make sure to do at least a little bit of work every day.

I try to schedule my Slurm jobs so they run when I’m not actively working, so I can collect results when I get back. When I don’t have at least some Slurm jobs going, I feel anxious. I also feel pressure to use coding agents whenever I can. At the same time, I find that these agents can create an illusion of productivity: I end up with more “dead time” where I’m just waiting for the agent to finish thinking.

I’m in my 3rd year as a PhD student at a top-5 program for my field in the US, and I’ve been thinking a lot about time management recently. I'm done with classes and not TA'ing this quarter. I mainly target the 3 main ML conferences (though I would love to hit every deadline consistently, and I don’t), plus core NLP venues and journals.

submitted by /u/akardashian

[link] [comments]

Signals: finding the most informative agent traces without LLM judges [R]

Hello peeps! Salman, Shuguang, and Adil here from Katanemo Labs (a DigitalOcean company).

We wanted to introduce our latest research on agentic systems, called Signals. If you've been building agents, you've probably noticed that there are far too many agent traces/trajectories to review one by one, and using humans or extra LLM calls to inspect all of them gets expensive really fast. The paper proposes a lightweight way to compute structured “signals” from live agent interactions so you can surface the trajectories most worth looking at, without changing the agent’s online behavior. Computing Signals doesn't require a GPU.

Signals are grouped into a simple taxonomy across interaction, execution, and environment patterns, including things like misalignment, stagnation, disengagement, failure, looping, and exhaustion. In an annotation study on τ-bench, signal-based sampling reached an 82% informativeness rate versus 54% for random sampling, which translated to a 1.52x efficiency gain per informative trajectory.
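This sketch does not reproduce the paper's signal definitions. It only illustrates the general shape of signal-based sampling: cheap, GPU-free detectors run over each trajectory, and trajectories are ranked by how many detectors fire. The three detectors (looping, failure, exhaustion) are named after taxonomy categories, but their logic and thresholds (`window=3`, `max_steps=20`) are hypothetical stand-ins, as are the `Step` and `Trajectory` types. As a side note, the reported 1.52x gain is consistent with 82% / 54% ≈ 1.52.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    tool: str            # tool/action the agent invoked (hypothetical trace schema)
    args: str            # serialized arguments
    error: bool = False  # whether the call failed


@dataclass
class Trajectory:
    traj_id: str
    steps: list = field(default_factory=list)


def looping(traj, window=3):
    """True if the same (tool, args) call repeats `window` times in a row."""
    run = 1
    for prev, cur in zip(traj.steps, traj.steps[1:]):
        run = run + 1 if (prev.tool, prev.args) == (cur.tool, cur.args) else 1
        if run >= window:
            return True
    return False


def failure(traj):
    """True if any step reported an error."""
    return any(s.error for s in traj.steps)


def exhaustion(traj, max_steps=20):
    """True if the trajectory hit an (assumed) step budget."""
    return len(traj.steps) >= max_steps


SIGNALS = {"looping": looping, "failure": failure, "exhaustion": exhaustion}


def signals_for(traj):
    """Names of the signals that fire for a trajectory."""
    return [name for name, detect in SIGNALS.items() if detect(traj)]


def sample_informative(trajs, k):
    """Up to k trajectories, most signals first; sort stability keeps ties in input order."""
    ranked = sorted(trajs, key=lambda t: len(signals_for(t)), reverse=True)
    return ranked[:k]
```

Because the detectors only inspect the logged steps, they can run offline over stored traces without touching the live agent.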

Paper: arXiv 2604.00356. https://arxiv.org/abs/2604.00356

Project where Signals are already implemented: https://github.com/katanemo/plano

Happy to answer questions on the taxonomy, implementation details, or where this breaks down.

submitted by /u/AdditionalWeb107

[link] [comments]

Any implementations similar to D4RT? [D]

DeepMind released a paper on D4RT at the start of this year that crucially enabled a “4D” understanding of the world via structure from motion, generating:

1. Point cloud reconstruction from 2D videos (not static scenes)

2. Camera pose estimation

You could pass in a video of a dog walking on a beach, and it would estimate the 3D representation of the beach and the dog at any point in time.

They did not release the model, though. Are there any open-source or otherwise publicly available implementations of anything similar now?

submitted by /u/reddysteady

[link] [comments]

Parax v0.7: Parametric Modeling in JAX [P]

Hi everyone!

Parax is a library for "parametric modeling" in JAX that attempts to bridge pure JAX PyTrees and more object-oriented modeling approaches (e.g. using Equinox).

v0.7 has been released, featuring a more polished API as well as some detailed examples in the documentation.

Some of Parax's features:

Derived/constrained parameters with metadata